Excel Find Duplicates: A Practical Data Cleanup Guide

Learn how to find duplicates in Excel with built-in tools, formulas, and Power Query. This practical guide covers highlighting duplicates, removing them, and best practices for clean, reliable data.

You will learn how to find duplicates in Excel using Conditional Formatting, formulas such as COUNTIF, and Power Query, and how to decide when to remove duplicates rather than highlight them. This concise guide covers practical examples, cross-column scenarios, and best practices for maintaining clean data. By the end, you'll be able to quickly identify duplicates, decide the right approach, and document your process for teammates.

Understanding Duplicates in Excel

Duplicates in Excel are rows or values that appear more than once within a chosen scope. They can exist in a single column, across multiple columns, or as entire identical rows. The impact of duplicates ranges from skewed analytics to erroneous reporting, especially in customer lists, inventory records, or transaction logs. This section lays the groundwork for why finding duplicates in Excel matters and how different data structures (flat lists vs. relational tables) influence the detection strategy. According to XLS Library, mastering duplicate detection is a foundational skill for data cleaning and governance. By learning to identify duplicates accurately, you reduce confusion, avoid misinterpretation of metrics, and establish a clear path to data integrity. The goal is not only to mark duplicates but to decide whether they should be merged, flagged, or removed, depending on context. In practice, you’ll apply this knowledge to common datasets such as client rosters, order histories, and product catalogs to keep them reliable and ready for analysis.

Why Finding Duplicates Is Important

Duplicate records can skew counts, inflate totals, and complicate data analysis. A clean dataset supports reliable insights, reduces manual rework, and speeds up reporting cycles. Identifying duplicates reliably also improves data governance, because key fields (like IDs or emails) stay unique where uniqueness is required. The most effective approach combines fast visual checks with precise formulas and scalable techniques like Power Query. For teams, this translates into fewer errors, faster onboarding, and a repeatable process that can be documented and shared. The XLS Library team emphasizes that a structured approach to duplicates saves time and prevents downstream mistakes during data consolidation or joins in analytics workflows.

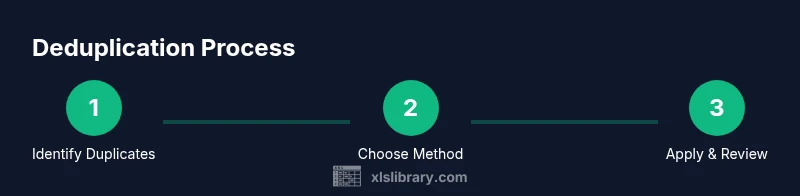

Quick Methods to Find Duplicates at a Glance

There isn’t a single tool that fits every scenario. You’ll typically start with Conditional Formatting to visually flag duplicates, then validate with formulas such as COUNTIF or COUNTIFS, and finally consider Power Query for larger datasets. Each method has trade-offs: visual methods are fast but less scalable; formulas are precise but can become unwieldy; Power Query offers robust, repeatable deduplication for big data pipelines. By combining methods, you create a flexible workflow that adapts to data size, structure, and quality requirements.

Tools & Materials

- Microsoft Excel (Excel 2016 or later recommended). Ensure you have the latest updates for best performance.

- Data workbook containing duplicates. Include headers for clarity.

- Backup copy of the workbook. Always back up before removing duplicates.

- Power Query (Get & Transform), built into Excel 2016 and later and available as a separate add-in for Excel 2010 and 2013. Helpful for large datasets and repeatable workflows.

- A clear plan for the scope of deduplication. Decide which columns constitute a duplicate key.

Steps

Estimated time: 25-40 minutes

- 1

Open your workbook and identify the target data

Launch Excel and locate the sheet containing duplicates. Check headers, verify data types (text vs numbers), and ensure there are no merged cells that could disrupt formulas. This step sets the stage for accurate deduplication and helps you decide which columns will form the key.

Tip: If possible, work on a copy of the data to avoid accidental loss.

- 2

Decide the scope: single column or multiple columns

Determine whether duplicates should be detected within one column or across a combination of columns (e.g., ID and date). The scope defines whether to use COUNTIF (single-column) or COUNTIFS (multi-column).

Tip: For multi-column keys, ensure all key fields are correctly aligned by rows.

- 3

Choose your primary method

If you need a quick visual, use Conditional Formatting. For precise results, prepare a helper column with a COUNTIF/COUNTIFS formula. For large datasets, plan a Power Query workflow to handle deduplication efficiently.

Tip: Start with a small sample to validate your approach before applying it to the full dataset.

- 4

Apply Conditional Formatting to highlight duplicates

Select the target range, go to Home > Conditional Formatting > Highlight Cells Rules > Duplicate Values, choose a formatting color, and apply. This highlights duplicates on-screen without altering data.
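
If you want to cross-check what the rule highlights, the logic can be sketched outside Excel. The Python snippet below is an illustration only: the sample email values and the `highlight_mask` helper are hypothetical, and the case-insensitive comparison is an assumption about how the Duplicate Values rule treats text.

```python
from collections import Counter

# Hypothetical email column; the values are illustrative only.
emails = [
    "ann@example.com",
    "bob@example.com",
    "Ann@Example.com",
    "cy@example.com",
]


def highlight_mask(values):
    """Return True for every cell whose value appears more than once.

    Text is compared case-insensitively, on the assumption that this
    mirrors how the Duplicate Values rule treats text.
    """
    normalized = [str(value).casefold() for value in values]
    counts = Counter(normalized)
    return [counts[value] > 1 for value in normalized]


mask = highlight_mask(emails)
for email, flagged in zip(emails, mask):
    print(f"{email:20} {'highlight' if flagged else ''}")
```

Note that every occurrence of a repeated value is flagged, including the first one, which is why highlighting alone does not tell you which row to keep.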

Tip: Limit the range to include only relevant columns to avoid over-highlighting.

- 5

Set up a helper column with a COUNTIF/COUNTIFS formula

In a new column, enter a formula like =COUNTIF($A$2:$A$100, A2)>1 for single-column duplicates, or =COUNTIFS($A$2:$A$100, A2, $B$2:$B$100, B2)>1 for multi-column duplicates. Copy down to identify duplicates.
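
The two formulas can be mirrored in a few lines of Python to show what the helper column computes. This is a minimal sketch, not Excel's implementation: the sample `ids` and `dates` columns and both function names are hypothetical.

```python
from collections import Counter

# Hypothetical columns A and B (IDs and dates); not taken from a real workbook.
ids = ["A1", "B2", "A1", "A1", "C3"]
dates = ["2024-01-05", "2024-01-05", "2024-02-10", "2024-01-05", "2024-03-01"]


def countif_flags(column):
    """Mirror =COUNTIF(range, cell)>1 for each row of one column."""
    counts = Counter(column)
    return [counts[value] > 1 for value in column]


def countifs_flags(*columns):
    """Mirror =COUNTIFS(range1, cell1, range2, cell2, ...)>1 for each row."""
    keys = list(zip(*columns))  # one tuple per row, e.g. ("A1", "2024-01-05")
    counts = Counter(keys)
    return [counts[key] > 1 for key in keys]


# Note: COUNTIF ignores case in Excel; this sketch compares values exactly,
# so normalize case first if that difference matters for your data.
single_column = countif_flags(ids)
multi_column = countifs_flags(ids, dates)
print(single_column)
print(multi_column)
```

In this sample, the third row is flagged by the single-column check because its ID repeats, but not by the two-column check because its ID and date combination is unique.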

Tip: Use absolute references for the range and relative references for the current row cell.

- 6

Review results and decide: keep or remove

Examine flagged duplicates and decide which rows to keep. If the dataset contains legitimate repeated records, you might mark them rather than remove. Make a note of any business rules used.
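
During review it helps to see each repeated key next to the rows where it occurs. The sketch below groups rows that way, assuming the data starts in sheet row 2 under a header row. The `records` sample and the `duplicate_groups` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical (ID, name) records; row 1 of the sheet is assumed to hold headers.
records = [
    ("A1", "Ann"),
    ("B2", "Bob"),
    ("A1", "Ann B."),
    ("C3", "Cy"),
    ("B2", "Bob"),
]


def duplicate_groups(rows, key_index=0, first_row=2):
    """Map each repeated key to the sheet row numbers where it appears."""
    positions = defaultdict(list)
    for offset, row in enumerate(rows):
        positions[row[key_index]].append(first_row + offset)
    # Keep only keys that occur on more than one row.
    return {key: nums for key, nums in positions.items() if len(nums) > 1}


groups = duplicate_groups(records)
for key, row_numbers in groups.items():
    print(key, row_numbers)
```

Here the two "A1" rows carry different names, which is the kind of case where a business rule (merge, flag, or keep both) needs to be written down.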

Tip: Filter by the helper column to focus only on duplicates during review.

- 7

Remove duplicates (when appropriate)

If duplicates are truly redundant, use Data > Remove Duplicates. Select the columns that form the key and confirm. Excel keeps the first occurrence of each key and deletes the later ones, so sort the data first if a particular row should survive. This action modifies the dataset, so ensure backups are available.
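
The keep-first behavior is easy to reason about with a small sketch. The Python below is an illustration under stated assumptions, not Excel's code: the sample rows and the `remove_duplicates` helper are hypothetical.

```python
# Hypothetical (ID, name, date) rows; not taken from a real workbook.
rows = [
    ("A1", "Ann", "2024-01-05"),
    ("B2", "Bob", "2024-01-06"),
    ("A1", "Ann B.", "2024-02-01"),
    ("B2", "Bob", "2024-01-06"),
]


def remove_duplicates(rows, key_columns):
    """Keep the first row for each key and drop later rows with the same key."""
    seen = set()
    kept = []
    for row in rows:
        key = tuple(row[i] for i in key_columns)
        if key not in seen:
            seen.add(key)
            kept.append(row)
    return kept


kept = remove_duplicates(rows, key_columns=(0,))
removed_count = len(rows) - len(kept)
print(f"{removed_count} duplicate rows removed; {len(kept)} unique rows remain.")
```

With only the ID column as the key, the later "Ann B." row is dropped even though its other fields differ, which shows why the choice of key columns matters.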

Tip: Always preview the results first with a copy to verify the removed rows align with expectations.

- 8

Optional: Use Power Query for scalable deduplication

Load the data into Power Query, use the Remove Duplicates feature or Group By to consolidate by key columns, then load back to Excel. This approach scales well for frequent deduplication tasks.
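
Group By differs from Remove Duplicates in that it combines the repeated rows instead of discarding them. The sketch below shows the idea in Python, with a sum as the aggregation. The `orders` sample and the `group_by_sum` helper are hypothetical, and this is not Power Query's M code.

```python
# Hypothetical (SKU, quantity) order lines; not taken from a real workbook.
orders = [
    ("SKU-1", 2),
    ("SKU-2", 1),
    ("SKU-1", 3),
]


def group_by_sum(rows):
    """Consolidate rows by key, summing the quantity for each key."""
    totals = {}
    for key, quantity in rows:
        totals[key] = totals.get(key, 0) + quantity
    # Dicts preserve insertion order, so keys appear in first-seen order.
    return list(totals.items())


consolidated = group_by_sum(orders)
print(consolidated)
```

Use this style of consolidation when the repeated rows each carry information you need, such as quantities that should be totaled rather than thrown away.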

Tip: Power Query records each transformation as a step, leaves the source data unchanged, and can be refreshed when new data arrives.

People Also Ask

What is the difference between removing duplicates and highlighting duplicates in Excel?

Highlighting duplicates uses visual formatting to show where duplicates exist, without changing the data. Removing duplicates deletes duplicate rows based on selected columns. Choose highlighting for review and removal only after you confirm which records should stay.

Highlighting shows where duplicates are, while removing duplicates deletes them. Use highlighting to confirm before cleaning.

Can I find duplicates across several columns at once?

Yes. Use a multi-column key with COUNTIFS to count how many rows share the same combination of values across those columns. A row is flagged as a duplicate when the count for its key is greater than one.

Yes. Use COUNTIFS with multiple columns to detect duplicates across them.

How do I keep the first occurrence when removing duplicates?

Excel's Remove Duplicates tool keeps the first occurrence by default. You can specify which columns form the key and then remove duplicates. Always pre-check with a backup.

Remove Duplicates keeps the first instance by default. Make sure you know which columns form the key.

Is there a way to find duplicates in a very large dataset without slowing down Excel?

Power Query offers a more scalable approach to finding and removing duplicates for large datasets. It can handle large loads more efficiently than in-workbook formulas, and it preserves a repeatable workflow.

Power Query scales better for big data and keeps a repeatable process.

Can I automate duplicate detection for future imports?

Yes. You can record Power Query steps or write dynamic formulas that adjust with new data. Saving the workflow as a template helps teams reuse the process.
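
If imports arrive as CSV files, the same check can also be scripted outside Excel. The sketch below is one possible approach, not part of the Excel workflow above: the `duplicate_keys_in_csv` helper, the column names, and the sample data are all hypothetical.

```python
import csv
import io
from collections import Counter


def duplicate_keys_in_csv(csv_text, key_fields):
    """Return the key combinations that occur more than once in CSV text."""
    reader = csv.DictReader(io.StringIO(csv_text))
    keys = [tuple(row[field] for field in key_fields) for row in reader]
    counts = Counter(keys)
    return sorted(key for key, n in counts.items() if n > 1)


# Hypothetical import with a header row; real files would be read from disk.
sample = (
    "id,email\n"
    "1,ann@example.com\n"
    "2,bob@example.com\n"
    "1,ann@example.com\n"
)

repeated = duplicate_keys_in_csv(sample, ["id", "email"])
print(repeated)
```

Running a check like this on each new file before loading it gives teammates the same documented rule every time.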

Automate via Power Query steps or dynamic formulas and reuse the workflow template.

The Essentials

- Identify duplicates with purpose: highlight, validate, or remove.

- Choose the right method (visual, formula, or Power Query) based on dataset size.

- Always back up before removing duplicates.

- Document your rules for future audits and teamwork.