What Slows Down Excel the Most: A Practical Troubleshooting Guide

A guide to identifying and fixing the top factors that slow Excel, with practical steps, diagnostics, and best practices for faster workbooks.

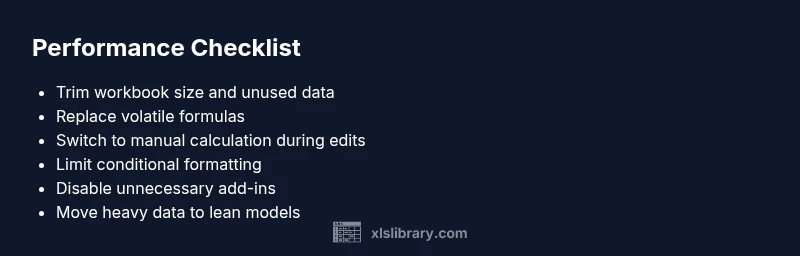

The biggest Excel slowdowns come from bloated workbooks, volatile formulas, and heavy data models. According to XLS Library, the most impactful culprits are large files with many worksheets, frequent recalculations, and excessive conditional formatting. Quick fixes are to switch to manual calculation, trim data, and break tasks into smaller, modular workbooks.

Why Excel Performance Matters

In the fast-paced world of data, speed is not a luxury; it is a necessity. When an Excel workbook responds slowly, it drags down analysis, delays reporting, and invites errors. What slows down Excel the most isn’t a single feature but a mix of file size, calculation strategy, and how formulas are structured. According to XLS Library, the biggest slowdowns occur when workbooks are large, calculations are aggressive, and data models aren’t modular. Even seemingly small habits, such as keeping many hidden sheets or repeatedly recalculating volatile formulas, add up. The good news is that you can pinpoint delays and apply targeted fixes that deliver meaningful gains without rebuilding entire models.

Common Culprits That Slow Down Excel

- Large workbook size: Workbooks with dozens of sheets, embedded objects, or heavy data buffers demand more memory and longer calculation times. The cost compounds when many sheets reference each other.

- Volatile formulas: Functions like INDIRECT, OFFSET, TODAY, NOW, and RAND recalculate on every recalculation pass, whether or not their inputs changed, and every cell that depends on them recalculates too. In large models this triggers cascading slowdowns.

- Frequent recalculation: In Automatic mode, every edit recalculates the changed cells, their dependents, and all volatile formulas. In sizeable, heavily linked datasets, even a minor edit can end up recalculating most of the workbook, wasting cycles.

- Excessive conditional formatting and data validation: Visual rules that apply to large ranges multiply rendering and recalculation workload.

- External data connections and queries: Live links to external sources (queries, Power Query steps) can stall as data refreshes run.

- Add-ins and automation scripts: Background tasks from add-ins run on every action, stealing memory and CPU from core calculations.

- Heavy data models and PivotTables: Complex relationships and large pivot caches slow interaction and updates.

- Hardware limitations: Insufficient RAM or CPU resources magnify every other issue, turning minor slowdowns into noticeable lag.

As you diagnose, remember the XLS Library approach emphasizes profiling which factor dominates your specific workbook. The goal is to isolate the primary culprits before layering in fixes.
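To see why a single volatile cell can dominate, it helps to model recalculation as a walk over a dependency graph. The sketch below is a simplified illustration, not Excel's actual engine: the `dependents` map, the cell names, and the function `cells_to_recalculate` are all hypothetical, and the only assumption carried over from the list above is that volatile cells count as changed on every pass.

```python
from collections import deque

def cells_to_recalculate(dependents, edited, volatile=()):
    """Return every cell marked dirty after an edit.

    dependents maps a cell to the cells whose formulas read it.
    Volatile cells are treated as changed on every pass (simplified model).
    """
    queue = deque(set(edited) | set(volatile))
    dirty = set(queue)
    while queue:
        cell = queue.popleft()
        for child in dependents.get(cell, ()):
            if child not in dirty:
                dirty.add(child)
                queue.append(child)
    return dirty

# Hypothetical model: A1 feeds B1; volatile cell V1 feeds C1..C3, which feed D1.
dependents = {
    "A1": ["B1"],
    "V1": ["C1", "C2", "C3"],
    "C1": ["D1"], "C2": ["D1"], "C3": ["D1"],
}
without_volatile = cells_to_recalculate(dependents, edited=["A1"])
with_volatile = cells_to_recalculate(dependents, edited=["A1"], volatile=["V1"])
print(len(without_volatile), len(with_volatile))  # prints "2 7"
```

Editing A1 alone touches two cells, but once V1 is volatile the same edit drags in V1 and everything downstream of it. In a real workbook the downstream set can be thousands of cells.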

How to Measure Your Excel Efficiency

- Start with your workbook size and structure: note file size, the number of sheets, and whether data is stored in a single sheet or distributed across tabs.

- Check calculation behavior: is Calculation set to Automatic or Manual? Note how long each edit takes to settle when larger ranges or volatile functions are present.

- Inspect formulas and links: identify volatile formulas, cross-workbook references, and external data connections that trigger recalculation or refresh.

- Monitor memory and CPU usage: use Task Manager (Windows) or Activity Monitor (macOS) to see if Excel is consuming excessive RAM or CPU during edits.

- Profile with built-in tools: use Excel’s Formula Auditing tools (Trace Precedents, Trace Dependents, and Evaluate Formula) to pinpoint expensive formulas.

Measuring regularly helps you compare before/after results and validates that your optimizations are effective. Based on XLS Library research, routine checks of workbook size, calculation strategy, and data connections yield the clearest view of performance health.
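One way to take the size and structure measurements above without opening Excel is to read the file directly, since an .xlsx workbook is a ZIP package of XML parts. The sketch below is a minimal example under that assumption; the helper names `largest_parts` and `sheet_count` are hypothetical, and it assumes worksheets sit under `xl/worksheets/`, which is where Excel conventionally writes them. The demo archive is a stand-in built in memory, not a real workbook.

```python
import io
import zipfile

def largest_parts(xlsx, top=5):
    """List the biggest parts in an .xlsx package as (name, uncompressed bytes)."""
    with zipfile.ZipFile(xlsx) as package:
        sizes = [(info.filename, info.file_size) for info in package.infolist()]
    return sorted(sizes, key=lambda item: item[1], reverse=True)[:top]

def sheet_count(xlsx):
    """Count worksheet parts, assuming the conventional xl/worksheets/ location."""
    with zipfile.ZipFile(xlsx) as package:
        return sum(
            1 for name in package.namelist()
            if name.startswith("xl/worksheets/") and name.endswith(".xml")
        )

# Stand-in package for demonstration; pass a real file path in practice.
demo = io.BytesIO()
with zipfile.ZipFile(demo, "w") as z:
    z.writestr("xl/worksheets/sheet1.xml", "x" * 5000)
    z.writestr("xl/worksheets/sheet2.xml", "x" * 200)
    z.writestr("xl/media/image1.png", "x" * 90000)

print(largest_parts(demo, top=2))
print(sheet_count(demo))
```

Recording these numbers before and after a cleanup gives a simple baseline: a large entry under `xl/media/` points to images, while a single oversized sheet part points to data or formatting on that sheet.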

Practical Fixes You Can Implement Today

- Trim the data footprint and clean structure

  - Audit the workbook for unused sheets, hidden sheets, and obsolete data tables. Remove or archive them to reduce the recalculation surface.

  - Remove large images or compress them, and purge unnecessary named ranges that still reference cells.

  - Start with a backup, then consolidate multiple data sources into lean, well-scoped tables.

  - Tip: Create a lean data subset for reporting when possible.

- Optimize formulas and layout

  - Replace volatile functions with non-volatile equivalents where feasible (for example, use INDEX with defined ranges instead of OFFSET, and direct references or INDEX/MATCH instead of INDIRECT-based lookups).

  - Break complex formulas into helper columns; this simplifies each cell’s dependency graph and improves readability.

  - Minimize array formulas and use more efficient alternatives when possible.

  - Tip: Document formula logic so future edits don’t bloat the model again.

- Manage calculation strategy

  - Switch to Manual calculation during heavy edits; recompute only when the model is stable.

  - Use Calculate Now or Calculate Sheet options strategically to refresh results on demand.

  - Disable iterative calculation unless your model explicitly requires it.

  - Tip: Set a reminder to recalculate before finalizing reports.

- Reduce formatting and data validation load

  - Limit conditional formatting to essential rules and smaller ranges; avoid global application to entire columns if not needed.

  - Consolidate data validation rules and avoid applying the same rule across large data sets.

  - Tip: Move styling to a dedicated theme or template to keep data processing lean.

- Tame external data connections and add-ins

  - Disable nonessential add-ins; update or remove those that trigger on every action.

  - Schedule data refreshes during off-peak times; consider caching results for repeat reports.

  - If using Power Query, apply steps to filter and reduce data at the source.

  - Tip: Always refresh on a clean session to avoid cascading slowdowns.

- Streamline hardware and data models

  - Close unused apps to free RAM; consider upgrading RAM if large datasets are routine.

  - Split very large workbooks into modular files that interact via summarized data, not live cross-links.

  - Consider using a dedicated data model or Power Pivot for big datasets to avoid bloating a regular worksheet.

  - Tip: Regularly back up before restructuring.

- A quick win path you can try now

  - Create a copy of the workbook with only the essential data and formulas for day-to-day tasks, keeping the original intact for reference.

  - Validate results against the full model to ensure consistency prior to sharing.

  - Tip: Version control keeps you safe when testing aggressive optimizations.

Estimated time for these fixes varies, but a focused pass often takes 60–120 minutes for a mid-sized workbook.
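Before replacing volatile functions, you need to know where they are. The sketch below is one possible approach, assuming you have already exported formulas as a mapping of cell address to formula text (how you export them is up to you). The function name `find_volatile` is hypothetical, and the list adds NOW and RANDBETWEEN to the functions named in this guide. It is a plain text scan, so a function name that appears inside a quoted string would be reported as a false positive.

```python
import re

# Functions Excel treats as volatile (not an exhaustive list).
VOLATILE = ("INDIRECT", "OFFSET", "TODAY", "NOW", "RAND", "RANDBETWEEN")
_PATTERN = re.compile(r"\b(" + "|".join(VOLATILE) + r")\s*\(", re.IGNORECASE)

def find_volatile(formulas):
    """Map each cell address to the volatile functions its formula calls."""
    hits = {}
    for address, formula in formulas.items():
        names = sorted({m.group(1).upper() for m in _PATTERN.finditer(formula)})
        if names:
            hits[address] = names
    return hits

# Hypothetical formulas exported from a workbook.
formulas = {
    "B2": "=SUM(OFFSET(A1,0,0,10,1))",
    "B3": "=INDEX(A:A,MATCH(D1,C:C,0))",
    "B4": "=IF(TODAY()>E1,RAND(),0)",
}
print(find_volatile(formulas))
# prints {'B2': ['OFFSET'], 'B4': ['RAND', 'TODAY']}
```

Here B3 already uses the non-volatile INDEX/MATCH pattern, so only B2 and B4 are candidates for rewriting.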

Preventive Habits to Keep Excel Fast

- Build lean templates: design input/output templates with minimal volatile logic and clean data structures.

- Modularize data: split data into logical tables and feed them into the model through defined interfaces rather than live links.

- Regularly audit formulas and formatting rules: prune outdated rules and review dependencies every few months.

- Use Power Query for data cleaning: perform heavy lifting outside the core workbook so calculations stay lean.

- Document changes: maintain a changelog of optimizations to avoid regressions and help future troubleshooting.

- Plan performance tests: run small, repeatable tests after each optimization to quantify impact.

Keeping Excel fast is an ongoing discipline, not a one-off fix. A proactive approach—clear structure, lean formulas, and mindful data modeling—delivers lasting speed gains and fewer headaches for analysts.
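For the periodic audit of formatting rules, a rough size check can flag rules applied to far more cells than the data needs. The sketch below is illustrative only: `cells_covered` and the sample rule names are hypothetical, and it handles simple A1-style ranges such as `A2:A5000`, `A:A`, or `1:1`. It relies on the worksheet limits of 1,048,576 rows and 16,384 columns. The count is an upper bound on coverage, not a direct measure of cost, since Excel may limit its work to the used range.

```python
import re

MAX_ROWS, MAX_COLS = 1_048_576, 16_384  # worksheet limits in current Excel
_REF = re.compile(r"^\$?([A-Za-z]{0,3})\$?(\d*)$")

def _col_number(letters):
    """Convert column letters to a 1-based index (A -> 1, AA -> 27)."""
    n = 0
    for ch in letters.upper():
        n = n * 26 + (ord(ch) - ord("A") + 1)
    return n

def _parse(ref):
    """Split one reference into (column, row); either may be None."""
    m = _REF.match(ref)
    if not m or not (m.group(1) or m.group(2)):
        raise ValueError(f"not an A1 reference: {ref!r}")
    col = _col_number(m.group(1)) if m.group(1) else None
    row = int(m.group(2)) if m.group(2) else None
    return col, row

def cells_covered(a1_range):
    """Count the cells an A1-style range spans, including whole rows or columns."""
    start, _, end = a1_range.partition(":")
    c1, r1 = _parse(start)
    c2, r2 = _parse(end or start)
    cols = abs(c2 - c1) + 1 if c1 is not None and c2 is not None else MAX_COLS
    rows = abs(r2 - r1) + 1 if r1 is not None and r2 is not None else MAX_ROWS
    return rows * cols

# Hypothetical conditional formatting rules and the ranges they apply to.
rules = {"highlight overdue": "A:A", "flag negatives": "B2:B5000"}
for name, rng in rules.items():
    print(name, rng, cells_covered(rng))
```

In this example the whole-column rule spans 1,048,576 cells while the bounded rule spans 4,999, which makes the first one the obvious candidate to narrow.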

Steps

Estimated time: 60-120 minutes

1. Audit workbook structure

   Review all sheets for relevance, delete or archive unused ones, and remove unused named ranges. Consolidate data into lean tables and minimize embedded objects.

   Tip: Always back up before removing sheets or data.

2. Tame formulas

   Identify volatile formulas and convert to non-volatile equivalents where possible. Break complex formulas into helper columns for clarity and performance.

   Tip: Document changes to prevent reintroduction of inefficiencies.

3. Adjust calculation strategy

   Set calculation to Manual during heavy edits. Recalculate only when finalizing changes or on a per-sheet basis as needed.

   Tip: Use Calculate Now or Calculate Sheet to control timing.

4. Trim formatting and data validation

   Limit conditional formatting to essential rules and narrow their ranges. Consolidate data validations to reduce recalculation load.

   Tip: Move cosmetic styling to templates rather than live data rules.

5. Manage data connections

   Disable unnecessary add-ins and review external data connections. Schedule refreshes during low-usage windows or cache results.

   Tip: Refresh on a clean session to avoid cascading slowdowns.

6. Evaluate hardware needs

   If large datasets are routine, assess RAM/CPU capacity and consider splitting data across workbooks or upgrading hardware.

   Tip: Free resources by closing nonessential apps during heavy work.

7. Validate optimization results

   Compare performance metrics before and after changes to ensure a real speed gain and consistent results.

   Tip: Keep a small baseline workbook for quick checks.
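The validation step has two parts: confirming the change is faster and confirming the results are unchanged. The sketch below shows one way to structure that check. It is a generic harness, not an Excel API: `median_seconds` and `results_match` are hypothetical helpers, and `slow_total` and `fast_total` are stand-in tasks that play the role of the same calculation before and after optimization. In practice each task would be whatever triggers and reads back your workbook's recalculation.

```python
import math
import statistics
import time

def median_seconds(task, repeats=5):
    """Run a task several times and return the median wall-clock time."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        task()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)

def results_match(before, after, tolerance=1e-9):
    """True when both runs produced the same cells with numerically close values."""
    if before.keys() != after.keys():
        return False
    return all(math.isclose(before[k], after[k], abs_tol=tolerance) for k in before)

# Stand-in tasks: the same total computed the slow way and the fast way.
def slow_total():
    return sum(i * i for i in range(200_000))

def fast_total():
    n = 200_000 - 1
    return n * (n + 1) * (2 * n + 1) // 6  # closed form for the sum of squares

before = {"total": slow_total()}
after = {"total": fast_total()}
print("results match:", results_match(before, after))
print("before:", median_seconds(slow_total), "s")
print("after:", median_seconds(fast_total), "s")
```

Using the median of several runs rather than a single timing reduces the effect of background activity on the comparison.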

Diagnosis: Excel workbook slows or freezes during calculation or editing

Possible Causes

- High: Large workbook size with many sheets and data objects

- High: Volatile formulas and complex dependencies

- Medium: Frequent full recalculation due to Automatic calculation mode

- Low: External data connections/queries and heavy add-ins

Fixes

- Easy: Audit and prune workbook structure: remove unused sheets and excessive objects.

- Easy: Switch to Manual calculation during edits and recalculate selectively.

- Medium: Replace volatile formulas with non-volatile alternatives and use helper columns.

- Easy: Disable nonessential add-ins and minimize external data refreshes.

- Medium: Move large data to a lean model or a separate workbook/data model.

People Also Ask

What is the single biggest factor slowing Excel the most?

In most cases, the largest culprits are a bloated workbook with many sheets and volatile formulas. Large data models, frequent recalculation, and excessive formatting compound the slowdown. By profiling these areas, you can target the right fixes.

The biggest factor is a combination of a large workbook and volatile formulas. Focus on trimming data and replacing volatile formulas to speed things up.

Is switching to manual calculation always beneficial?

Manual calculation helps during edits by preventing constant recalculation. It’s particularly effective when you’re making many changes in large datasets. Before finalizing, switch back to automatic or recalculate as needed to ensure accuracy.

Not always, but during big edits manual calculation helps speed things up. Remember to recalculate before finalizing results.

Can Power Query or data models slow Excel down?

Yes. Data queries and large data models can introduce overhead if not filtered or optimized. Use Power Query to clean data before loading and keep data models lean with only necessary relationships.

Yes, Power Query and large data models can slow things down if left unoptimized.

Are large files inherently slow, or can good design fix it?

Large files can be made faster with better design: modular data, lean formulas, and efficient use of calculations. A well-structured large workbook can often perform comparably to a smaller one if optimized correctly.

A well-designed large file can often run nearly as smoothly as a smaller one with proper optimization.

Will upgrading RAM noticeably improve Excel speed?

More RAM can help when working with large datasets, but it’s not a cure-all. Pair hardware upgrades with design optimizations (cleanup, formula improvements, and calculation strategy) for best results.

More RAM helps, but only with good optimization too.

When should I start a new workbook instead of optimizing the current one?

If a workbook becomes unwieldy despite optimizations, splitting data into smaller, purpose-built workbooks can simplify maintenance and restore speed. Keep a master data source separate from reporting calculations.

Split into smaller workbooks when optimization isn’t practical anymore.

The Essentials

- Prioritize workbook size and volatile formulas first

- Use manual calculation during edits to gain speed

- Break complex models into modular parts for easier optimization

- Limit conditional formatting and external connections to reduce overhead