Why is Excel Remove Duplicates Not Working? A Troubleshooting Guide

This troubleshooting guide explains why Excel's Remove Duplicates may not be working and provides practical data-cleaning steps to resolve duplicates accurately in 2026.

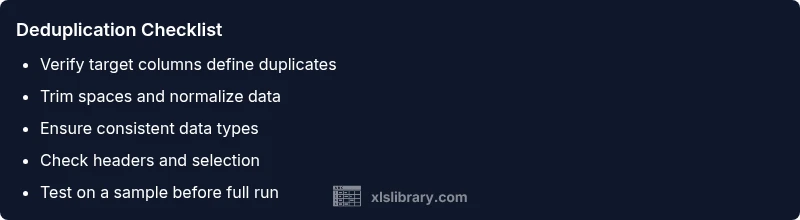

The most common reason Remove Duplicates fails in Excel is that rows aren’t truly identical. Extra spaces, non-printable characters, or differing data types can fool Excel’s comparison. Start by trimming whitespace, standardizing data types, and ensuring you select the exact columns. Enabling 'My data has headers' and checking for hidden rows also helps resolve the issue quickly.

Why this issue happens and how it unfolds

When users run Remove Duplicates in Excel and still see duplicates, the problem is rarely the tool itself. More often, the data isn’t perfectly identical across the comparison columns. Common culprits include leading or trailing spaces, non-breaking spaces, and numbers stored as text versus as numeric values. Inconsistent text casing is usually not one of them: Remove Duplicates ignores letter case, so "ACME" and "acme" count as the same value. Another frequent factor is selecting the wrong set of columns, or having hidden rows or filters that mask the true state of the data. According to XLS Library, many duplicate problems originate from data cleanliness gaps rather than a malfunction in Excel. This means the first step is to inspect the dataset with a critical eye for small inconsistencies that Excel treats as differences. By addressing these subtle gaps, you can restore confidence in deduplication results and prevent repeated headaches.
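To make the idea of "not truly identical" concrete, here is a minimal sketch in Python rather than Excel. It models an exact-match comparison and is not Excel's internal logic; the sample rows and the company name are made up for illustration.

```python
# Four rows that look the same on screen but are stored differently.
rows = [
    ("Acme Corp", "100"),        # baseline row
    ("Acme Corp ", "100"),       # trailing space after the name
    ("Acme\u00a0Corp", "100"),   # non-breaking space (character 160) inside the name
    ("Acme Corp", 100),          # amount stored as a number instead of text
]

# A set keeps one copy of each exactly-equal row, so any hidden
# difference makes a row count as unique.
unique_rows = set(rows)

print(len(rows))         # 4
print(len(unique_rows))  # 4 -> nothing was treated as a duplicate
```

Every row survives because each one differs from the baseline by a single invisible detail, which is the same situation that leaves apparent duplicates behind in a worksheet.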

In practice, take a moment to decide which columns define duplicates. If your goal is to remove rows that are identical across multiple fields, you must ensure every field in those columns aligns perfectly. A mismatch in even one column can cause Excel to keep rows that appear identical to the human eye. The urgency here is real: a small formatting mismatch can let true duplicates slip through and derail downstream analyses or reporting. The XLS Library Team emphasizes a focused review of your data structure before applying deduplication.

Consider also the version of Excel you’re using and how your workbook is stored. Shared workbooks, local copies, or data copied from other systems can introduce encoding differences or characters that Excel cannot easily standardize in-place. If you’re collaborating, another user may have altered formatting in a way that affects the outcome. In short, the fix often starts with a careful data audit rather than jumping straight to the deduplication tool.


Quick wins to test before deep cleaning

If you need to see quick improvements, start with simple checks. First, select the exact columns you want to dedupe and re-run Remove Duplicates. Next, apply a trim operation to all candidate fields to remove stray spaces. You can do this with the TRIM function in a helper column or via Power Query to create a clean reference table. Also verify that there are no hidden characters like line breaks or non-breaking spaces that can slip past the eye but affect equality checks. A fast way to spot such characters is to compare lengths with LEN (a value that is longer than it looks contains something hidden), to inspect individual characters with CODE or UNICODE, or to copy data into a plain text editor and re-import it. These initial steps often resolve many “not working” deduplication issues and restore expected results quickly.
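The hidden-character check described above can be sketched outside Excel as well. The helper below is a hypothetical illustration in Python (the function name `find_hidden_chars` is an assumption, not an Excel feature) that lists leading or trailing spaces plus any invisible whitespace, control, or format characters in a value exported from a sheet.

```python
import unicodedata

def find_hidden_chars(value: str) -> list[tuple[int, str, str]]:
    """Return (position, code point, Unicode name) for characters that are easy to miss."""
    hits = []
    for index, ch in enumerate(value):
        # An ordinary space only matters at the very start or end of the value.
        is_edge_space = ch == " " and (index == 0 or index == len(value) - 1)
        # Any other whitespace, control (Cc), or format (Cf) character is suspicious.
        is_invisible = ch != " " and (
            ch.isspace() or unicodedata.category(ch) in ("Cc", "Cf")
        )
        if is_edge_space or is_invisible:
            hits.append((index, f"U+{ord(ch):04X}", unicodedata.name(ch, "UNNAMED")))
    return hits

print(find_hidden_chars("Acme\u00a0Corp "))
# [(4, 'U+00A0', 'NO-BREAK SPACE'), (9, 'U+0020', 'SPACE')]
print(find_hidden_chars("Acme Corp"))
# []
```

A value that returns an empty list has no obvious hidden characters; anything else points at the position to fix before deduplicating.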

Additionally, confirm whether your dataset has a header row and, if it does, that the option 'My data has headers' is checked in the Remove Duplicates dialog. When the option is unchecked, Excel treats the header row as data, which skews how the tool identifies duplicates. If possible, try deduping a small sample of the dataset first to ensure the configuration is correct before applying it to the full table. A disciplined, methodical approach saves time and reduces risk of data loss.

Finally, document your workflow before making changes. Note the columns selected, the data types, and any transformations you apply. Doing so makes it easier to reproduce successful steps or revert if needed. The goal is to establish a predictable, reliable process rather than relying on guesswork when data quality is imperfect.

Steps

Estimated time: 60-90 minutes

1. Back up data and define duplicate criteria

Before changing anything, save a copy of the workbook. Decide which columns constitute a duplicate, and whether you want to consider rows identical only on those columns or on a broader set. This clarity prevents unintended data loss.

Tip: Always keep a backup; it’s your safety net when deduping complex datasets.

2. Clean the data in the target columns

Create a clean reference: use TRIM to strip extra spaces and CLEAN to remove non-printable control characters. Neither function removes non-breaking spaces (character 160), so replace those first, for example with SUBSTITUTE(A2, CHAR(160), " "), where A2 stands in for the cell being cleaned. If needed, convert numbers stored as text to numeric values to ensure exact comparisons.

Tip: Do a quick LEN check to identify hidden spaces or characters.

3. Rerun Remove Duplicates with the correct options

Open the Remove Duplicates dialog, select the exact columns that define duplicates, and ensure 'My data has headers' is checked. Apply to a small test sample first before applying to the entire dataset.

Tip: Double-check the column order and the header state before running.

4. Validate results and inspect edge cases

After deduping, scan the results for any unexpected row removals. Look for partial duplicates where only some fields differ, which might require adjusting the dedupe criteria.

Tip: If something looks off, revert to the backup and repeat with refined criteria.

5. Consider advanced methods for complex cases

For persistent or intricate datasets, Power Query or a dedicated deduplication formula can offer more control and repeatability. These tools handle multi-column rules and data-type normalization more robustly.

Tip: Power Query steps are reusable and auditable.
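The clean-then-dedupe sequence in the steps above can be sketched as follows. This is an illustrative Python model, not how Excel or Power Query is implemented: `clean_text` only approximates SUBSTITUTE, CLEAN, and TRIM, the column names are invented, and the key is case-folded on the assumption that letter case should be ignored, as Remove Duplicates does.

```python
def clean_text(value) -> str:
    """Approximate SUBSTITUTE(CHAR(160)) + CLEAN + TRIM on a single cell value."""
    text = str(value).replace("\u00a0", " ")             # non-breaking space -> space
    text = "".join(ch for ch in text if ord(ch) >= 32)   # drop control characters 0-31
    return " ".join(part for part in text.split(" ") if part)  # trim, collapse spaces

def dedupe(rows, key_columns):
    """Keep the first row for each cleaned, case-insensitive key; later matches are dropped."""
    seen = set()
    kept = []
    for row in rows:
        key = tuple(clean_text(row[col]).casefold() for col in key_columns)
        if key not in seen:
            seen.add(key)
            kept.append(row)  # the original row is kept, not the cleaned copy
    return kept

rows = [
    {"name": "Acme Corp", "city": "Austin", "note": "first"},
    {"name": " acme\u00a0corp ", "city": "Austin", "note": "second"},
    {"name": "Acme Corp", "city": "Boston", "note": "third"},
]

result = dedupe(rows, ["name", "city"])
print([row["note"] for row in result])  # ['first', 'third']
```

The second row is dropped because its name matches the first once spaces and case are normalized, while the third row stays because the city differs. Comparing on a cleaned key while keeping the original row mirrors the helper-column approach: the raw data is untouched until you decide to overwrite it.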

Diagnosis: Excel Remove Duplicates returns unexpected results or seems to do nothing

Possible Causes

- High: Leading/trailing spaces or non-printable characters in key columns

- Medium: Inconsistent data types (numbers stored as text vs numbers)

- High: Incorrect column selection or headers misaligned

Fixes

- Easy: Trim spaces and clean non-printable characters in the target columns

- Easy: Convert data types to a consistent format (e.g., numbers as numbers, dates as proper dates)

- Easy: Ensure correct columns are selected and that 'My data has headers' is checked

- Medium: Apply deduplication on a cleaned range or use Power Query for robust deduplication

People Also Ask

Why is Remove Duplicates not removing all duplicates in my sheet?

Often, non-identical values sneak in due to spaces, encoding, or data type differences. Cleaning the data and ensuring the correct columns are selected typically resolves this. If problems persist, try Power Query for more controlled deduplication.

Often, you need to clean spaces, normalize data types, and reselect columns before deduping again.

How do I deduplicate when numbers are stored as text?

Convert text to numbers before deduping, or use a Power Query step that coerces types, ensuring that numeric-looking values compare as equal. This avoids treating the same value as different depending on formatting.

Convert the numbers to actual numeric values before deduping to avoid mismatches.
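As a rough illustration of that type coercion, the Python sketch below converts numeric-looking text to numbers before comparing. The helper name `coerce_number` is hypothetical, and treating the comma as a thousands separator is an assumption that only holds for some locales.

```python
import math

def coerce_number(value):
    """Return a float for numeric-looking text; return anything else unchanged."""
    if isinstance(value, str):
        # Assumption: commas are thousands separators, as in "1,000".
        candidate = value.strip().replace(",", "")
        try:
            number = float(candidate)
        except ValueError:
            return value  # not numeric text, leave it alone
        # Ignore text such as "nan" or "inf", which float() also accepts.
        return number if math.isfinite(number) else value
    return value

raw = ["42", 42, " 42.0 ", "1,000", 1000, "n/a"]

print(len(set(raw)))                            # 6 -> every raw value looks unique
print(len({coerce_number(v) for v in raw}))     # 3 -> 42, 1000, and "n/a"
```

After coercion, the text and numeric forms of the same amount compare as equal, so a deduplication pass sees them as one value.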

Can hidden characters affect deduplication?

Yes. Non-breaking spaces or line breaks can make identical-looking rows differ in their stored values. Removing or normalizing these characters is often the key fix.

Hidden characters can hide true duplicates; clean them out before deduping.

When should I use Power Query for deduplication?

Power Query provides more precise control, especially for multi-column rules and data-type normalization. It’s the recommended approach for complex sets or recurring tasks.

Power Query is great for complex deduplication with repeatable steps.

Is there a risk of data loss when removing duplicates?

Yes, always back up data and verify the criteria. Removing duplicates permanently alters the dataset, so test on a sample first and keep a copy.

There is a risk of losing data; back up and test on a sample first.
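One way to reduce that risk is to preview which rows would be removed before committing. The sketch below is a hypothetical Python helper, not an Excel feature: it groups rows by a trimmed, case-insensitive key and reports the 1-based positions that share each key. The column names and sample data are invented.

```python
from collections import defaultdict

def preview_removals(rows, key_columns):
    """Map each repeated key to the 1-based positions of the rows that share it."""
    positions = defaultdict(list)
    for number, row in enumerate(rows, start=1):
        key = tuple(str(row[col]).strip().casefold() for col in key_columns)
        positions[key].append(number)
    # Only keys seen more than once matter; the first position would be kept
    # and the remaining positions would be removed.
    return {key: nums for key, nums in positions.items() if len(nums) > 1}

sample = [
    {"email": "a@example.com", "plan": "pro"},
    {"email": "b@example.com", "plan": "free"},
    {"email": "A@example.com ", "plan": "pro"},
]

print(preview_removals(sample, ["email", "plan"]))
# {('a@example.com', 'pro'): [1, 3]}
```

Reviewing this kind of report on a small sample makes it easier to confirm the criteria are right before any rows are deleted.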


The Essentials

- Identify true duplicates across defined columns

- Clean data before deduping to avoid false results

- Check headers and column selection carefully

- Use Power Query for robust deduplication when needed

- Always validate results and back up data