Cleaning Data in Excel: A Practical How-To Guide

Learn practical steps to clean data in Excel: remove duplicates, standardize formats, trim spaces, and validate entries for reliable analysis using Power Query and formulas.

This guide shows you how to clean data in Excel by identifying inconsistencies, removing duplicates, normalizing formats, splitting data with Text to Columns, and validating entries. You'll follow practical, repeatable steps, with screenshot-style guidance and common pitfalls addressed, so your datasets are ready for analysis and yield reliable insights.

What is data cleaning in Excel and why it matters

Data cleaning in Excel is the deliberate process of locating and correcting errors, inconsistencies, and missing values in a dataset so that downstream analyses are accurate and actionable. Even small errors can compound into misleading results, faulty dashboards, or incorrect business decisions. By adopting a repeatable cleaning routine, you reduce manual rework, improve data governance, and increase confidence in your analyses. According to XLS Library, mastering practical data cleaning in Excel sets a solid foundation for reliable insights across projects. In practice, cleaning data in Excel means treating it as a workflow, not a one-off task. You’ll learn to identify patterns of inconsistency, establish standard data formats, and create auditable trails of edits that others can reproduce. This approach is valuable whether you’re preparing a customer list, a sales pipeline, or a research dataset for reporting.

Common data quality issues in Excel

Data quality problems come in many flavors. Duplicates can inflate totals and misrepresent segments. Inconsistent formatting, such as dates that look alike but are stored differently (some as true dates, others as text), complicates sorting and grouping. Missing values can break formulas and skew averages. Text fields may contain trailing spaces, non-printable characters, or mixed case that makes lookups fail. When data enters your workbook through forms, exports, or manual entry, you’ll often see a mix of numeric values stored as text, abrupt line breaks in notes, or inconsistent abbreviations. The key is to recognize these patterns early and set explicit rules for data entry and cleaning. A systematic approach helps you catch issues before you perform analysis.
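To make these patterns concrete, here is a minimal Python sketch of a column profile that counts the issues described above. It runs outside Excel and is only an illustration: the function name `profile_column`, the report keys, and the sample values are all hypothetical, and the numeric check is deliberately rough.

```python
def profile_column(values):
    """Count common quality issues in one column of raw values."""
    report = {"blank": 0, "padded": 0, "non_printable": 0, "numeric_text": 0}
    for value in values:
        # Missing values: None or text that is empty after stripping.
        if value is None or (isinstance(value, str) and value.strip() == ""):
            report["blank"] += 1
            continue
        if isinstance(value, str):
            # Leading or trailing whitespace that would break lookups.
            if value != value.strip():
                report["padded"] += 1
            # Control characters such as line breaks inside a cell.
            if any(ord(ch) < 32 for ch in value):
                report["non_printable"] += 1
            # Numbers stored as text (thousands separators ignored).
            try:
                float(value.replace(",", ""))
                report["numeric_text"] += 1
            except ValueError:
                pass
    return report


# Hypothetical sample column mixing several of the issues.
sample = ["42", " 17 ", None, "n/a", "1,200", "note\nline", 3.5]
report = profile_column(sample)
```

Running a profile like this before cleaning gives you a baseline to compare against once the cleaning steps are done.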

Core techniques for cleaning data in Excel

There are several core techniques you can apply in almost every dataset:

- TRIM to remove unnecessary spaces at the start or end of text fields.

- CLEAN to strip non-printable characters that hinder matching.

- PROPER, UPPER, and LOWER for consistent casing.

- SUBSTITUTE and REPLACE to correct common typos or standardize abbreviations.

- VALUE or DATEVALUE to convert text into numbers or dates.

- Text to Columns to split a single field into multiple logical columns.

- Data Validation to prevent new invalid entries from entering the dataset.

- Remove Duplicates to prune repeated records.

Together, these techniques create a cleaner foundation for reliable analysis. In more complex scenarios, Power Query can automate and scale these steps across large datasets.
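If you want to reason about what the text functions do before applying them to a whole column, the following Python sketch approximates TRIM, CLEAN, PROPER, and SUBSTITUTE. It is an approximation under stated assumptions, not Excel's exact behavior: the helper names are hypothetical, `str.title()` only roughly matches PROPER, and the abbreviation mapping is invented for the example.

```python
import re


def excel_trim(text: str) -> str:
    """Approximate TRIM: strip edge spaces and collapse inner runs of spaces."""
    return re.sub(r" +", " ", text).strip(" ")


def excel_clean(text: str) -> str:
    """Approximate CLEAN: drop ASCII control characters (codes 0 to 31)."""
    return "".join(ch for ch in text if ord(ch) >= 32)


def normalize_name(raw: str) -> str:
    # Similar in spirit to =PROPER(TRIM(CLEAN(A2))) in a helper column.
    return excel_trim(excel_clean(raw)).title()


# Hypothetical mapping table for standardizing abbreviations.
ABBREVIATIONS = {"St.": "Street", "Ave.": "Avenue"}


def standardize_abbreviations(text: str) -> str:
    # Similar in spirit to nested SUBSTITUTE calls.
    for short, full in ABBREVIATIONS.items():
        text = text.replace(short, full)
    return text


cleaned_name = normalize_name("  jane \x07  DOE ")
cleaned_address = standardize_abbreviations("12 Main St.")
```

The order matters: removing non-printable characters first means any spaces they leave behind are still collapsed by the trim step.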

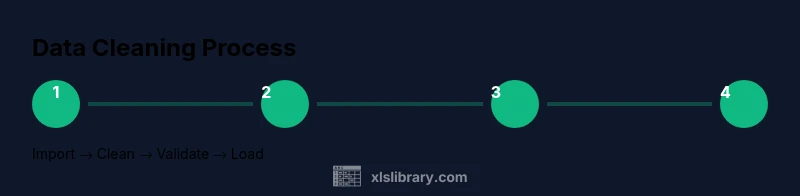

Practical workflow: from raw data to clean data

A practical cleaning workflow helps you reproduce results and communicate methods clearly. Start with a quick data audit: skim for obvious issues, note column types, and identify patterns of inconsistencies. Then implement a staged cleaning process:

- Create a duplicate of the original data to preserve the source.

- Standardize formats (dates, numbers, text) and trim spaces.

- Remove duplicates based on key identifiers.

- Split fields into logical columns as needed (for example, full name into first and last).

- Apply data validation rules to prevent future errors.

- Validate the cleaned data by running quick audits (counts, unique value checks).

- Save a clean dataset with a clear version label.

This process minimizes surprises and keeps your data lineage intact. If you plan to refresh data regularly, consider Power Query as the engine to refresh the clean dataset with minimal manual work.
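The staged workflow can be sketched in a few lines of Python to show the logic: work on a copy, standardize, remove duplicates on a key, then audit. The column names (`id`, `email`) and sample rows are hypothetical, and keeping the first occurrence of each key is an assumption chosen to match what Excel's Remove Duplicates does.

```python
import copy

# Hypothetical raw data; the first two rows differ only in case and spacing.
raw_rows = [
    {"id": "101", "email": "Ana@Example.com "},
    {"id": "101", "email": "ana@example.com"},
    {"id": "102", "email": "bo@example.com"},
]


def clean_rows(rows):
    working = copy.deepcopy(rows)  # preserve the source data
    seen, result = set(), []
    for row in working:
        # Standardize before comparing, so near-duplicates match.
        row["email"] = row["email"].strip().lower()
        key = (row["id"], row["email"])
        if key not in seen:  # keep the first occurrence of each key
            seen.add(key)
            result.append(row)
    return result


clean = clean_rows(raw_rows)

# Quick audit: counts before and after, plus a unique-value check.
audit = {
    "rows_before": len(raw_rows),
    "rows_after": len(clean),
    "unique_ids": len({row["id"] for row in clean}),
}
```

Standardizing before deduplicating is the important design choice here: if the order were reversed, the two rows for ID 101 would both survive.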

Tools and features you should know

Excel provides a robust toolkit for data cleaning. Familiarize yourself with:

- Remove Duplicates: quick pruning of repeated rows.

- Text to Columns: split combined fields (e.g., full name → first, last).

- TRIM, CLEAN, PROPER, UPPER, LOWER: standardize text.

- SUBSTITUTE and FIND/REPLACE: fix recurring typos and standardize values.

- Data Validation: enforce allowed values and formats at entry.

- Conditional Formatting: visually flag anomalies (e.g., out-of-range dates).

- Sort & Filter: quickly identify outliers and patterns.

- Power Query: load, transform, and automate cleaning for large datasets.

With these tools, you can construct resilient cleaning routines that survive data refreshes and evolving datasets. The key is to document your methods so others can reproduce them and trust the cleaned results.

Automating cleaning tasks with Power Query

Power Query is the most scalable way to clean data in Excel, especially for recurring tasks or large datasets. It lets you define a sequence of transformations that can be refreshed with new data without altering the original file. Typical Power Query steps include:

- Importing the data from a source (Excel, CSV, or databases).

- Removing duplicates and filtering rows.

- Replacing or standardizing values with conditional logic.

- Splitting columns and changing data types.

- Appending or merging queries to integrate multiple sources.

Once you configure the query, you can load the cleaned data back into Excel as a table or connection only. This reduces manual edits and ensures consistency across refreshes. If you’re new to Power Query, start with a small dataset and gradually add steps as you become comfortable. Over time, you’ll develop a clean-data workflow that scales with your needs.
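The idea of a recorded sequence of transformations that is replayed on refresh can be illustrated with a small Python sketch. This is not Power Query or its M language; it only mirrors the concept. The step functions, the `COUNTRY_MAP` mapping, and the sample data are hypothetical.

```python
from typing import Callable, Dict, List

Row = Dict[str, str]
Step = Callable[[List[Row]], List[Row]]

# Hypothetical mapping used to standardize values.
COUNTRY_MAP = {"usa": "United States", "u.s.": "United States"}


def drop_blank_rows(rows: List[Row]) -> List[Row]:
    return [r for r in rows if any(v.strip() for v in r.values())]


def trim_text(rows: List[Row]) -> List[Row]:
    return [{k: v.strip() for k, v in r.items()} for r in rows]


def standardize_country(rows: List[Row]) -> List[Row]:
    return [
        {**r, "country": COUNTRY_MAP.get(r["country"].lower(), r["country"])}
        for r in rows
    ]


def remove_duplicates(rows: List[Row]) -> List[Row]:
    seen, unique = set(), []
    for r in rows:
        key = tuple(sorted(r.items()))
        if key not in seen:
            seen.add(key)
            unique.append(r)
    return unique


# The ordered list plays the role of a query's recorded steps.
APPLIED_STEPS: List[Step] = [
    drop_blank_rows, trim_text, standardize_country, remove_duplicates,
]


def refresh(source: List[Row]) -> List[Row]:
    rows = [dict(r) for r in source]  # never modify the source
    for step in APPLIED_STEPS:
        rows = step(rows)
    return rows


january = [
    {"customer": "Ana ", "country": "USA"},
    {"customer": "Ana", "country": "usa"},
    {"customer": "", "country": " "},
]
february = january + [{"customer": "Bo", "country": "Canada"}]

january_clean = refresh(january)
february_clean = refresh(february)
```

Because the steps are defined once and applied to whatever data arrives, the February refresh needs no manual edits, which is the same benefit the query gives you in Excel.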

Data governance and documentation

Clean data is not just about the present; it’s about reproducibility and governance. Maintain a documented cleaning log that records:

- The original data version, date, and source.

- Each cleaning rule applied and the rationale behind it.

- Any assumptions or mappings used during cleaning.

- A record of validation checks and their results.

- The location and version of the cleaned dataset.

Good governance reduces risk when datasets are used across teams and projects. It also helps new collaborators understand what was changed and why, preventing re-cleaning or misinterpretation. In practice, establish a simple template for logging steps and store it alongside your cleaned data. If you adopt Power Query, maintain a readme describing each applied transformation, so future users can audit the pipeline.
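One possible shape for such a logging template is sketched below in Python, writing entries as CSV so the log can sit next to the cleaned workbook. The field names and the example entry are hypothetical; adapt them to whatever your team actually needs to record.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class LogEntry:
    source_version: str   # original data version, date, and source
    rule: str             # cleaning rule applied
    rationale: str        # why the rule was applied
    assumptions: str      # assumptions or mappings used
    check_result: str     # validation check and its outcome
    output_location: str  # location and version of the cleaned dataset


# Hypothetical example entry.
entries = [
    LogEntry(
        source_version="customers_v1 (CRM export)",
        rule="Trim spaces in Email",
        rationale="Lookups failed on padded values",
        assumptions="Emails are case-insensitive",
        check_result="Row count unchanged",
        output_location="customers_clean_v1.xlsx",
    ),
]


def to_csv(log_entries) -> str:
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(LogEntry)])
    writer.writeheader()
    for entry in log_entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


log_csv = to_csv(entries)
```

A flat, one-row-per-rule layout keeps the log easy to open in Excel itself and easy to filter by rule or dataset version.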

Troubleshooting common errors and validation checks

Even the best cleaning workflow encounters hiccups. Common issues include numbers still stored as text after conversion, date values not recognized due to regional settings, or formulas returning errors after a structural change. To mitigate these problems:

- Recheck data types after transformations and convert with VALUE, DATEVALUE, or the DATE function where appropriate.

- Use error checking to identify cells that fail validations and fix them in a controlled way.

- Build a small dashboard to compare row counts, column profiles, and a few key statistics before and after cleaning.

- If a dataset is extremely large, consider splitting it into chunks and cleaning in stages to avoid performance bottlenecks.

Addressing issues early saves time and preserves data integrity across analyses.
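The regional-settings problem is easier to see with a worked example. The Python sketch below parses the same text under a day-first and a month-first convention, and adds a forgiving text-to-number conversion that returns nothing instead of an error. The function names are hypothetical, and only the two slash-separated formats are assumed.

```python
from datetime import date, datetime
from typing import Optional


def parse_date(text: str, day_first: bool) -> Optional[date]:
    """Parse a slash-separated date under an explicit regional convention."""
    fmt = "%d/%m/%Y" if day_first else "%m/%d/%Y"
    try:
        return datetime.strptime(text.strip(), fmt).date()
    except ValueError:
        return None  # flag for review instead of guessing


def to_number(text: str) -> Optional[float]:
    """Rough analogue of VALUE: None marks text that is not a number."""
    try:
        return float(text.strip().replace(",", ""))
    except ValueError:
        return None


# The same text yields two different dates depending on the convention.
as_day_first = parse_date("03/04/2024", day_first=True)
as_month_first = parse_date("03/04/2024", day_first=False)

# A value that is valid in only one convention fails in the other.
unparsed = parse_date("25/12/2024", day_first=False)
```

Ambiguous values such as 03/04/2024 parse without error under both conventions, so the only reliable fix is to confirm the source's regional format rather than trust the result.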

Case study: mini dataset cleanup example

Imagine you have a customer list with names in a single column, dates in mixed formats, and several duplicate entries. A practical cleanup would involve:

- Splitting the name into First and Last using Text to Columns.

- Converting dates to a single standard format (YYYY-MM-DD) with DATEVALUE and TEXT functions.

- Trimming spaces and removing non-printable characters with CLEAN and TRIM.

- Removing duplicates based on a customer ID and email address.

- Validating essential fields (ID and Email) with Data Validation rules.

After applying these steps, you would verify data quality with quick audits and save a new clean dataset for reporting. This case illustrates how a repeatable approach yields cleaner data suitable for dashboards and analytics.
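For readers who want to trace the case study end to end, here is a minimal Python sketch of the same cleanup on three invented records. Everything in it is an assumption made for illustration: the field names, the sample values, the list of accepted date formats, and the deliberately loose email pattern.

```python
import re
from datetime import datetime

# Hypothetical customer records with the issues described above.
records = [
    {"id": "C001", "name": " maria  lopez", "email": "maria@example.com", "joined": "2024-01-15"},
    {"id": "C001", "name": "Maria Lopez", "email": "Maria@example.com ", "joined": "15/01/2024"},
    {"id": "C002", "name": "sam\x0b chen ", "email": "sam@example", "joined": "20.01.2024"},
]

DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")  # assumed input formats
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # loose sanity check


def to_iso(text):
    """Return the date as YYYY-MM-DD, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue
    return None


def tidy(text):
    """Drop control characters, collapse spaces, and strip the ends."""
    printable = "".join(ch for ch in text if ord(ch) >= 32)
    return re.sub(r" +", " ", printable).strip()


def clean(rows):
    seen, out = set(), []
    for row in rows:
        key = (row["id"].strip(), row["email"].strip().lower())
        if key in seen:  # duplicate on ID and email: keep the first
            continue
        seen.add(key)
        first, _, last = tidy(row["name"]).title().partition(" ")
        out.append({
            "id": key[0], "first": first, "last": last, "email": key[1],
            "joined": to_iso(row["joined"]),
            "email_ok": bool(EMAIL_PATTERN.match(key[1])),
        })
    return out


cleaned = clean(records)
```

Note that the invalid email is flagged rather than dropped, so the record stays available for correction at the source.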

Tools & Materials

- Microsoft Excel (desktop or Microsoft 365): Latest version recommended; ensure Get & Transform (Power Query) is available.

- Power Query (built into modern Excel): Used for automated cleaning workflows.

- Sample dataset (Excel or CSV): Practice data with common issues.

- Text editor (optional): Review large text fields outside Excel if needed.

- Keyboard shortcuts cheat sheet: Speed up cleaning tasks.

Steps

Estimated time: 60-90 minutes

1. Import your raw data and inspect columns

Open the dataset in Excel and scan for obvious issues like mixed data types, missing values, and obvious duplicates. This first step guides what needs cleaning and where to apply rules.

Tip: Create a copy of the worksheet to preserve the original data.

2. Remove duplicates based on key identifiers

Identify unique keys (e.g., ID, Email) and remove exact duplicates to avoid skewed results. Use Data > Remove Duplicates and review the affected rows.

Tip: Filter to show duplicates before removing to confirm accuracy.

3. Normalize data types

Ensure numeric fields are numeric, dates are real dates, and text fields are consistently typed. Convert text numbers using VALUE() as needed.

Tip: Check regional date formats if dates don’t parse.

4. Trim spaces and clean non-printables

Remove leading/trailing spaces and non-printable characters that hinder matching and lookups. Use a helper column with TRIM and CLEAN.

Tip: Apply to all text columns before merging datasets.

5. Standardize text formatting

Enforce consistent casing and spellings to improve matching and grouping. Use PROPER for names and SUBSTITUTE for common abbreviations.

Tip: Create a mapping table for standard abbreviations.

6. Split data into separate columns

Use Text to Columns to separate fields like full name into First and Last, or address into Street, City, State. Verify results on a sample.

Tip: Always test on a subset before applying to the whole dataset.

7. Validate data with rules

Apply Data Validation to enforce allowed values and formats, preventing future errors. Create a validation list and set clear error alerts.

Tip: Link validation lists to a named range for easier maintenance.

8. Automate with Power Query or formulas

Leverage Power Query for repeatable cleaning across refreshed data, or use formulas for smaller datasets to speed up results.

Tip: Document the steps in a readme so others can reproduce.

9. Audit and finalize

Run counts, unique value tallies, and spot checks to confirm cleanliness. Save as a new version with a clear label.

Tip: Keep an audit trail of changes for governance.

People Also Ask

What is data cleaning in Excel?

Data cleaning in Excel is the process of identifying and correcting errors, inconsistencies, and missing values to ensure accurate analysis. It involves standardizing formats, removing duplicates, and validating data to prevent future issues.

Data cleaning in Excel is fixing errors and ensuring your data is consistent for accurate analysis.

How do I remove duplicates in Excel?

Select the data range and use the Remove Duplicates feature under the Data tab. Choose the key columns to consider for duplicates and confirm. Always review the results before saving.

Use Excel's Remove Duplicates tool, pick your key columns, and confirm the results before saving.

When should I use Power Query vs formulas for cleaning?

Power Query is better for large datasets and repeatable cleaning pipelines because it can refresh with new data. Formulas work well for smaller datasets or one-off tasks.

Power Query scales for big datasets; formulas work for quick, smaller cleanups.

How can I validate data to prevent future errors?

Apply Data Validation rules to critical columns, supply a validation list, and configure informative error alerts. This helps ensure only acceptable values enter the dataset.

Set Data Validation rules to prevent wrong entries and guide users.

What are common pitfalls in data cleaning?

Relying on a single method without documenting steps, neglecting data provenance, and overwriting the original data can lead to irreversible mistakes. Always back up data and document changes.

Avoid risking originals—back up and document every cleaning action.

Can cleaning data in Excel fix all issues?

Cleaning improves quality but cannot fix fundamental data collection problems. Combine clean-up with good data governance and clear data-entry processes.

It helps, but you also need good data governance and collection methods.

The Essentials

- Identify data quality issues at the outset.

- Use a repeatable workflow with Power Query or clear formulas.

- Validate data to prevent future errors and ensure reliable analyses.

- Maintain an audit trail of cleaning steps for governance.

- XLS Library recommends adopting these practices for better data reliability.