Excel Delete Duplicates: A Practical Step-by-Step Guide

Learn how to delete duplicates in Excel with built-in tools and Power Query. This practical guide from XLS Library covers methods, best practices, and safety tips to keep your data accurate for reliable analysis.

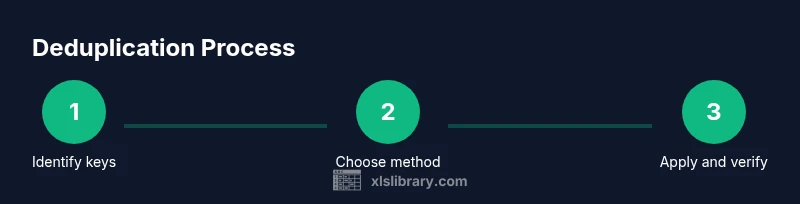

Learn how to remove duplicates in Excel with a clear, repeatable workflow. You will identify duplicate rows, choose the right columns to check, and apply built-in tools such as Remove Duplicates or Power Query. The goal is a clean dataset ready for analysis, with essential information preserved. No add-ins are required for basic deduplication tasks.

Why deduplicating data matters in Excel

In any data project, duplicates distort analysis, waste storage, and can mislead decisions. Deleting duplicates in Excel is a common data cleaning task that saves time and improves accuracy. According to XLS Library, starting with a clear plan for what counts as a duplicate helps you avoid accidental data loss. A well-executed deduplication workflow yields consistent records, clean lists, and reliable summaries for reports and dashboards. Removing duplicates reduces noise in your data, which leads to faster, more trustworthy insights. Whether you work with customer lists, inventory, or survey responses, a disciplined approach to deduplication makes downstream tasks smoother. This guide from the XLS Library team walks you through practical methods you can apply today across typical datasets.

Understanding duplicates and how Excel treats them

Duplicates are not always identical. In Excel, you must decide which columns define a duplicate and whether blanks count as duplicates. Exact duplicates occur when every chosen column matches, while partial duplicates happen when key fields align and others differ. The same rule applies whether you are cleaning a small spreadsheet or a large database. Excel treats each row as a candidate for removal based on the columns you select. It is essential to identify a robust set of keys before acting. This minimizes the risk of deleting legitimate records and preserves the integrity of your data.
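To make the distinction between exact and partial duplicates concrete, here is a minimal Python sketch of the same logic outside Excel. The function name `classify_duplicates`, the column names, and the sample rows are all hypothetical and exist only for illustration. The sketch treats a group as "exact" when every column of every row matches and as "partial" when only the key columns match.

```python
from collections import defaultdict

def classify_duplicates(rows, key_columns):
    """Group rows by the chosen key columns and label each duplicate group."""
    groups = defaultdict(list)
    for row in rows:
        key = tuple(row[col] for col in key_columns)
        groups[key].append(row)

    report = {}
    for key, members in groups.items():
        if len(members) < 2:
            continue  # a key that appears once is not a duplicate
        # "exact" when all rows in the group are identical, otherwise "partial"
        all_identical = all(member == members[0] for member in members)
        report[key] = "exact" if all_identical else "partial"
    return report

# Hypothetical sample data
rows = [
    {"Name": "Ana Ruiz", "Email": "ana@example.com", "City": "Lima"},
    {"Name": "Ana Ruiz", "Email": "ana@example.com", "City": "Lima"},
    {"Name": "Ben Cole", "Email": "ben@example.com", "City": "Leeds"},
    {"Name": "B. Cole", "Email": "ben@example.com", "City": "Leeds"},
    {"Name": "Cy Park", "Email": "cy@example.com", "City": "Seoul"},
]
report = classify_duplicates(rows, ["Email"])
```

Running a check like this on a sample before deleting anything shows how many of your "duplicates" actually differ in non-key columns, which is a hint that the key set may be too narrow.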

Quick methods: built-in Remove Duplicates in Excel

The Remove Duplicates tool in Excel is fast and reliable for straightforward deduplication tasks. First, select the data range you want to clean, including headers if present. Then go to the Data tab and click Remove Duplicates. In the dialog, choose the columns that define a duplicate and confirm. Excel removes the extra rows and keeps the first instance of each duplicated set. If your data has headers, check the "My data has headers" option so the tool excludes that row from the comparison. For a cautious approach, copy the data to a new sheet before running the operation and review the result there.

Using Power Query for larger datasets

Power Query is ideal for larger datasets or when you need a repeatable workflow. Import the data into Power Query, then either use the Remove Duplicates option on the key columns or group by the key columns so that each key yields a single row. Power Query leaves your original data intact and lets you refresh the results with a click. This method is especially effective when you regularly ingest new data and want to apply the same deduplication rules without manual edits. You can also combine Power Query with sorting or custom filters to preserve the most recent entry or the highest-valued record for each key.
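The idea of keeping the most recent or highest-valued record per key can be sketched in a few lines of Python. This is not Power Query code; it only illustrates the grouping rule. The function name `keep_best_per_key`, the column names, and the sample data are hypothetical.

```python
def keep_best_per_key(rows, key_column, rank_column):
    """Keep one row per key: the row with the largest rank_column value."""
    best = {}
    for row in rows:
        key = row[key_column]
        if key not in best or row[rank_column] > best[key][rank_column]:
            best[key] = row
    return list(best.values())

# Hypothetical sample data; ISO-formatted dates sort correctly as plain text
orders = [
    {"Email": "ana@example.com", "Updated": "2024-01-05", "Total": 40},
    {"Email": "ben@example.com", "Updated": "2024-02-10", "Total": 15},
    {"Email": "ana@example.com", "Updated": "2024-03-01", "Total": 25},
]
latest = keep_best_per_key(orders, "Email", "Updated")   # most recent per email
highest = keep_best_per_key(orders, "Email", "Total")    # highest total per email
```

The two calls keep different rows for the same email, which is why the ranking rule should be written down before any rows are removed.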

Deduplicating with formulas: UNIQUE and COUNTIF

For users with Excel 365 or Excel 2021, the UNIQUE function provides a flexible way to produce a deduplicated list directly in a formula. To extract unique rows, pass the full multi-column range to UNIQUE; the result spills into the sheet as a dynamic array. Older versions of Excel can approximate deduplication with a helper column that uses COUNTIF to mark duplicates, followed by filtering or copying the non-duplicate entries. Formulas offer a transparent, auditable approach and work well when you need a result that updates with the source data rather than a static cleaned copy.
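The helper-column approach boils down to a running count: a value is flagged once it has already appeared higher up in the column. In Excel this is commonly written with an expanding range, for example `=COUNTIF($B$2:B2, B2)>1` if the keys happened to sit in column B (that layout is an assumption). The Python sketch below mirrors the logic; `flag_repeats` and the sample emails are hypothetical. One difference to keep in mind: COUNTIF ignores letter case, while this sketch compares values exactly as typed.

```python
from collections import Counter

def flag_repeats(values):
    """Return True for each value that already appeared earlier in the list."""
    seen = Counter()
    flags = []
    for value in values:
        seen[value] += 1
        flags.append(seen[value] > 1)  # running count, like an expanding COUNTIF range
    return flags

# Hypothetical sample data
emails = [
    "ana@example.com",
    "ben@example.com",
    "ana@example.com",
    "cy@example.com",
    "ben@example.com",
]
flags = flag_repeats(emails)
# Filtering out flagged rows leaves the first occurrence of each value
unique_emails = [email for email, is_repeat in zip(emails, flags) if not is_repeat]
```

Because the flags sit next to the data instead of deleting anything, you can review them before filtering, which is the main appeal of the helper-column method.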

Best practices: planning your dedup criteria

Before removing duplicates, define the exact keys that identify a duplicate. Decide how to handle blanks and partial matches, and decide which occurrence to keep (the first, the latest, or the most complete). Create a backup before any operation and test on a sample subset. Document the criteria as part of your data governance process so teammates can reproduce the result. If you must deduplicate across multiple sheets, plan a consolidated view to ensure consistency and avoid conflicting changes.

Common pitfalls and how to avoid them

A frequent pitfall is removing duplicates based on too few columns, which can discard legitimate records. Another issue is assuming that the first occurrence is always correct; sometimes the latest entry should be kept. When using Remove Duplicates, verify headers are properly detected and ensure you understand the impact on dependent analyses. If your data contains important identifiers, always back up the original file before running deduplication and validate the results with spot checks.

A practical workflow with a sample dataset

Imagine you have a customer list with Name, Email, and Order ID columns. You want to remove duplicates by Email while keeping the earliest Order ID. Start by sorting the table by Order ID in ascending order so that the earliest order for each email comes first; this assumes Order IDs increase over time. Then select the whole table, open Remove Duplicates, enable "My data has headers", and check only the Email column. Because Remove Duplicates keeps the first occurrence, the earliest order per email survives. Review the outcome to confirm that each customer appears once and that the first order per email is preserved. This scenario demonstrates a focused deduplication based on a single key while safeguarding essential fields.
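The same scenario can be sketched in Python to show why the sort order matters. The function name `dedupe_keep_earliest` and the sample customers are hypothetical, and the sketch assumes that a smaller Order ID means an earlier order.

```python
def dedupe_keep_earliest(rows, key_column, order_column):
    """Sort ascending by order_column, then keep the first row seen per key."""
    ordered = sorted(rows, key=lambda row: row[order_column])
    seen = set()
    kept = []
    for row in ordered:
        if row[key_column] in seen:
            continue  # a later order for an email we already kept
        seen.add(row[key_column])
        kept.append(row)
    return kept

# Hypothetical sample data, deliberately out of order
customers = [
    {"Name": "Ana Ruiz", "Email": "ana@example.com", "Order ID": 1007},
    {"Name": "Ben Cole", "Email": "ben@example.com", "Order ID": 1002},
    {"Name": "Ana Ruiz", "Email": "ana@example.com", "Order ID": 1001},
    {"Name": "Ben Cole", "Email": "ben@example.com", "Order ID": 1009},
]
cleaned = dedupe_keep_earliest(customers, "Email", "Order ID")
```

Without the sort, the first row kept for Ana would be order 1007 rather than 1001, which is exactly the mistake the sorting step in the workflow prevents.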

Quality checks after deduplication

After deduplication, perform a quick quality check. Recount the rows and compare with the pre-deduplication count to confirm the expected reduction. Inspect a few randomly selected records to confirm that the correct rows were kept. Run simple pivot tables to verify that totals and categories remain sensible. If results look off, revert to the backup, re-evaluate the key columns, and adjust the deduplication method accordingly.
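These checks are simple arithmetic, and a short Python sketch shows what "expected reduction" means in practice. The function name `check_dedup` and the sample data are hypothetical; in Excel you would get the same numbers from row counts and a count of distinct keys.

```python
def check_dedup(original, cleaned, key_column):
    """Compare row counts and keys before and after deduplication."""
    original_keys = [row[key_column] for row in original]
    cleaned_keys = [row[key_column] for row in cleaned]
    return {
        "rows_removed": len(original) - len(cleaned),
        # every repeat of a key beyond its first occurrence should be removed
        "expected_removed": len(original_keys) - len(set(original_keys)),
        "no_duplicates_left": len(cleaned_keys) == len(set(cleaned_keys)),
        "no_keys_lost": set(cleaned_keys) == set(original_keys),
    }

# Hypothetical sample data
original = [
    {"Email": "ana@example.com", "Order ID": 1001},
    {"Email": "ben@example.com", "Order ID": 1002},
    {"Email": "ana@example.com", "Order ID": 1007},
]
cleaned = [
    {"Email": "ana@example.com", "Order ID": 1001},
    {"Email": "ben@example.com", "Order ID": 1002},
]
result = check_dedup(original, cleaned, "Email")
```

If `rows_removed` and `expected_removed` disagree, or if any key disappeared entirely, that is the signal to go back to the backup and revisit the key columns.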

Automating deduplication with macros or Power Query

If you perform deduplication regularly, consider automating the process. Record a macro that performs the Remove Duplicates steps, or write a small VBA routine that refreshes a Power Query query containing your deduplication steps. Power Query offers a repeatable, auditable path that scales well with data size. Automation reduces manual errors and saves time on recurring tasks, especially in data cleaning pipelines.

Real-world examples by industry

In sales operations, removing duplicates from customer and contact lists prevents contacting the same client twice. In inventory management, deduplicating SKU records reduces stock counting errors. Marketing datasets benefit from deduplicating on user email to avoid sending the same campaign more than once. Healthcare and finance datasets require careful key selection to avoid accidentally deleting legitimate patient or transaction records. The key takeaway is to tailor the deduplication approach to the data context and business goals.

Quick comparison of methods and choosing the right approach

Remove Duplicates is fast for simple cases and when you know the exact keys. Power Query offers repeatable workflows suitable for ongoing data feeds. Formulas like UNIQUE provide dynamic results for modern Excel users. When data volumes are small, manual checks can suffice; for large, evolving datasets choose Power Query or automation. The right approach balances speed, auditability, and risk of data loss.

Tools & Materials

- Excel app (Office 365 or Excel 2019+): Ensure your version supports Remove Duplicates, Power Query, and UNIQUE if possible

- Backup copy of the original data: Saved on a separate file or sheet

- Sample test dataset: A small subset to validate criteria before full run

- Notes or data dictionary: Document the keys and rules used for deduplication

Steps

Estimated time: 45-60 minutes

1. Prepare your data

Open the workbook and identify the key columns that define a duplicate. Ensure headers are correct and make a backup copy before editing.

Tip: Use a separate sheet to test changes before applying them to the main dataset.

2. Choose your dedupe method

Decide between Remove Duplicates, Power Query, or a formula based on data size and need for repeatability.

Tip: For large or ongoing datasets, prefer Power Query or automation.

3. Apply Remove Duplicates

Select your data range, go to Data > Remove Duplicates, pick the key columns, and confirm. If headers exist, enable the "My data has headers" option.

Tip: Review the dialog to ensure the correct columns are selected before confirming.

4. Review results

Check the remaining records for sensible continuity. Look for gaps that indicate accidental deletions.

Tip: Spot-check critical keys such as IDs or emails.

5. Optionally use Power Query

Load data into Power Query, remove duplicates by the chosen key columns, and apply the changes. Refresh if data updates.

Tip: Keep a log of the steps for auditability.

6. Test with UNIQUE or COUNTIF

If you use formulas, verify the results in a dynamic array or helper column. Compare with the original to verify accuracy.

Tip: Cross-check a sample subset against the original.

7. Validate business rules

Confirm that the deduplication preserves the necessary records for business processes.

Tip: If needed, keep one representative per group.

8. Finalize and document

Save the cleaned data, update the data dictionary, and note the rules used for deduplication.

Tip: Create a changelog entry for the operation.

People Also Ask

When should I remove duplicates and when should I avoid it?

Remove duplicates when you are confident that the key columns define real duplicates and that the result will not remove essential records. Avoid deduping when data integrity depends on multiple attributes or when duplicates carry business meaning. Always test on a copy first.

Remove duplicates when key columns define duplicates and you are sure the result preserves essential records. Test on a copy first.

Can I remove duplicates in place without losing data?

Yes, but best practice is to work on a copy or a backup. In-place deduping is risky if you later need to revert changes. Power Query offers an auditable path without altering the original data directly.

You can dedupe in place, but it risks data loss. Use a backup or Power Query for safer, auditable changes.

How do I handle duplicates across multiple columns?

Define a composite key by selecting all columns that determine a duplicate. This ensures only rows with identical key values are removed. Consider keeping the row with the most complete or latest data.

Create a composite key from all relevant columns and remove duplicates based on that key.

What if I need to keep the most recent entry?

Sort by a date or version column before deduping so that the record you want comes first within each group. Remove Duplicates keeps the first occurrence, so sort in descending order to keep the newest record or in ascending order to keep the oldest.

Sort by date or version before deduping, so you keep the most relevant entry.

Is Power Query only for large data sets?

Power Query scales well from small to very large datasets. It offers repeatable steps, easy refresh, and an auditable workflow that is valuable in professional data cleaning.

Power Query works well for both small and large datasets and gives you repeatable steps.

How can I revert deduplication if needed?

Always keep the original data or a backup. If you apply deduplication in Power Query or a macro, you can re-import the data or revert the steps to restore the original state.

Keep a backup or the original data so you can revert changes if needed.

The Essentials

- Back up data before deduping

- Define clear key columns to identify duplicates

- Choose the method based on data size and repeatability

- Validate results with spot checks and summaries

- Document the deduplication rules for audits