How to Deal with Excel Row Limit: Practical Workarounds

Learn practical, Excel-focused strategies to manage the row limit, including Power Pivot, Power Query, data partitioning, and scalable reporting. A practical guide from XLS Library for handling large datasets without sacrificing speed or accuracy.

To work around Excel's row limit, use the Data Model (Power Pivot) to store large datasets in memory, and load only what you need with Power Query. Split very large datasets across sheets or workbooks, or offload data to an external database and analyze via PivotTables. For practical results, design reports from the model rather than the raw rows.

Understanding the Excel row limit

Excel's row limit is a hard cap on how many rows a single worksheet can hold. In modern desktop Excel (the .xlsx format, Excel 2007 and later), a worksheet supports up to 1,048,576 rows and 16,384 columns; the legacy .xls format is capped at 65,536 rows and 256 columns. The ceiling is real: rows beyond it are not loaded into the sheet, and a workbook that approaches it often slows down severely. For many XLS Library readers, the challenge isn't just the number itself but the cumulative effect of formulas, lookups, and formatting across a near-capacity sheet. According to XLS Library, practical constraints appear long before the sheet is full, especially when consolidating data from multiple sources or importing raw exports from databases. The first step is to audit your workbook: count the raw rows, identify duplicates, and determine whether every row is essential for your analysis. If you can stay well below the limit, you gain speed and reliability; if not, you need a plan that preserves analytic power while staying under the cap. This section sets the stage by clarifying where the limit bites and how to think about data structure so you don't drown in rows.
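The audit described above can also be run outside Excel, before a large export is ever opened. The following is a minimal Python sketch, not an Excel feature: it assumes the data arrives as CSV, treats two rows as duplicates only when every field matches exactly, and uses a made-up three-row sample in place of a real export. The function name `audit_rows` is hypothetical.

```python
import csv
import io

# Worksheet grid size in modern desktop Excel: 2**20 rows by 2**14 columns.
EXCEL_MAX_ROWS = 2 ** 20  # 1,048,576
EXCEL_MAX_COLS = 2 ** 14  # 16,384


def audit_rows(lines, has_header=True):
    """Count data rows and exact duplicate rows in CSV input, one row at a time."""
    reader = csv.reader(lines)
    if has_header:
        next(reader, None)  # skip the header; it is not a data row
    seen = set()
    total = 0
    duplicates = 0
    for row in reader:
        total += 1
        key = tuple(row)
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
    unique = total - duplicates
    # A header, if present, occupies one worksheet row of its own.
    capacity = EXCEL_MAX_ROWS - (1 if has_header else 0)
    return {
        "total": total,
        "duplicates": duplicates,
        "unique": unique,
        "fits_in_one_sheet": unique <= capacity,
    }


# Hypothetical sample standing in for a real export file.
sample = io.StringIO("id,region\n1,North\n2,South\n1,North\n")
report = audit_rows(sample)
print(report)
```

For a real file, pass an open file handle instead of the `StringIO` sample. The `seen` set keeps every unique row in memory, so for very large exports a hash of each row may be a better key.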

As you read, remember that the goal is to keep Excel responsive, not to cram every row into a single sheet. The strategies below are designed to help you maintain agility, so you can keep building reports without constantly chasing performance or risking file corruption. With the right approach, you can handle datasets that span tens of thousands of rows—or even millions—while keeping your workflow straightforward and repeatable.

Core strategies to handle large datasets in Excel

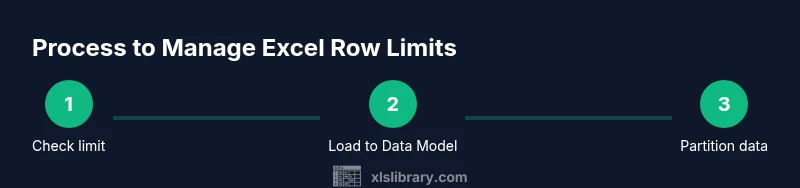

Dealing with a row limit starts with understanding when to shift from a pure sheet-based workflow to model-based analysis and external data handling. The core strategies involve (a) offloading data into a data model, (b) filtering and loading only the necessary rows, and (c) partitioning data across multiple sheets or workbooks. The XLS Library analysis shows that teams achieve better performance when they separate the data layer from the presentation and calculations. By moving raw data into a Data Model (Power Pivot), you leverage an in-memory engine that can efficiently store and query very large datasets while keeping your worksheets lean. Power Query then becomes a powerful companion to prune, transform, and import only what you actually need for a given analysis, reducing memory pressure. Finally, data partitioning—splitting data into smaller, logically connected chunks—helps you stay within limits and makes collaboration easier. These strategies are not mutually exclusive; they work best when combined in a layered workflow that keeps data clean, fast, and auditable.

In practice, adopt a philosophy of “load once, query often.” The more you separate data storage from reporting, the more scalable your Excel workbooks become. This approach also supports repeatable data refreshes, which are essential for ongoing dashboards and weekly reports. As you implement these concepts, you’ll find you can work with much larger datasets than a single worksheet would reasonably permit, while preserving the familiar Excel interface that analysts rely on.

Data Model (Power Pivot) and why it's powerful

The Data Model, accessible via Power Pivot in Excel, provides a separate engine for storing and querying large datasets. Rather than populating a worksheet with every row, you load data into the model and define relationships between tables. This lets you write concise measures (using DAX) and build PivotTables that summarize many millions of rows without loading them into a worksheet grid. The model supports one-to-many relationships between tables, hierarchies, and calculated columns, enabling complex analytics while keeping the worksheet lean; many-to-many relationships are not supported directly and usually need a bridge table. In practice the constraint is available memory, which is one reason 64-bit Excel is preferred for large models. From a practical standpoint, this means: (1) faster workbook opens and saves, (2) more responsive filtering and drilling into data, and (3) the ability to maintain clean, well-organized source data behind a lightweight report surface. For Excel users dealing with large volumes, the Data Model is arguably the most useful addition since PivotTables. It's worth investing time to model data accurately and set up robust relationships so you can unlock scalable insights without hitting row limits.

To get started, import your data into the Data Model, create relationships between tables, and then insert PivotTables connected to the model. Keep formulas in the model language (DAX) rather than in worksheet cells to maximize performance and maintainability. This approach also makes it easier to re-use data across multiple reports with consistent definitions and calculations.

Power Query: filter before loading

Power Query is your best friend for reducing the data you bring into Excel. It lets you filter, transform, and shape data as it's loaded, so you only bring in the rows you actually need. Filtering early minimizes memory usage and accelerates refresh times, and when the source is a database that supports query folding, Power Query can push the filters to the source so the excluded rows never leave it. A typical workflow is to connect to a database or CSV file, apply row-level filters (e.g., a date range, a category, or a subset of IDs), and then load the cleaned subset into the Data Model. This approach keeps the raw source intact while giving you lean, analysis-ready data in Excel. Power Query also supports parameterized queries, which are helpful for weekly or monthly reporting cycles. Note that incremental refresh is a Power BI feature rather than something Excel's Power Query offers, so each refresh in Excel re-runs the full query. As you build your queries, document each step so that teammates can reproduce the results or adjust the filters when requirements change. Power Query helps you stay nimble as data grows, without forcing a complete rewrite of your workbook.

A practical tip is to centralize your query logic in a dedicated query that feeds the Data Model. This makes it easy to adjust filters without touching the underlying workbook, which reduces the risk of breaking dashboards. If you’re new to Power Query, start with a simple data source and a single filter, then progressively add transformations as your needs evolve.
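The filter-before-load idea is not specific to Power Query. As an illustration only, here is a Python analogue that streams a CSV and keeps just the rows inside a date range, so the file Excel eventually opens is already small. It is a sketch under assumptions: the column name `order_date`, ISO-formatted dates, and the sample rows are all hypothetical, and this is not Power Query M code.

```python
import csv
import io
from datetime import date


def filter_rows(source, sink, start, end, date_column="order_date"):
    """Copy only rows whose date falls within [start, end] from source to sink."""
    reader = csv.DictReader(source)
    writer = csv.DictWriter(sink, fieldnames=reader.fieldnames)
    writer.writeheader()
    kept = 0
    for row in reader:  # rows are streamed, never held in memory all at once
        row_date = date.fromisoformat(row[date_column])
        if start <= row_date <= end:
            writer.writerow(row)
            kept += 1
    return kept


# Hypothetical export with one row before and one row after the wanted range.
source = io.StringIO(
    "order_date,category,amount\n"
    "2023-12-31,A,10\n"
    "2024-01-15,B,20\n"
    "2024-06-30,A,30\n"
    "2025-01-01,B,40\n"
)
sink = io.StringIO()
kept = filter_rows(source, sink, date(2024, 1, 1), date(2024, 12, 31))
print(kept)
print(sink.getvalue())
```

Passing `start` and `end` as arguments mirrors the parameterized-query approach: the same function serves different reporting periods without edits to its body.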

Partitioning data across sheets and workbooks

Partitioning data is a straightforward way to respect row limits while keeping reporting clear and maintainable. Split very large datasets into multiple worksheets or separate workbooks, each containing a logical slice of the data (e.g., by year, region, or product line). Use a consistent naming convention and unify the analysis through the Data Model or centralized queries. This approach reduces per-sheet complexity, speeds up load times, and makes collaboration easier because different teams can work on different data partitions without conflicts. When partitioning, avoid duplicating logic across sheets; instead, reference the same model or queries so that calculations remain consistent.

If you choose to partition across workbooks, consider establishing a central dashboard in a master workbook that connects to the partitioned data via links or the Data Model. This keeps the user experience seamless while distributing storage and processing requirements. Be mindful of workbook interdependencies: broken links can cause errors or stale data after refresh. Regularly test your refresh process to ensure partitions stay in sync and your insights stay reliable.
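To make the partitioning rule concrete, the sketch below groups rows by a key such as year and then splits any group that would still exceed a per-sheet budget into numbered parts. It is an illustrative Python example under assumptions: the `partition_rows` helper, the `_partN` naming convention, and the sample rows are hypothetical, and the default budget reserves one worksheet row for a header.

```python
from collections import defaultdict

EXCEL_MAX_ROWS = 1_048_576


def partition_rows(rows, key, max_rows=EXCEL_MAX_ROWS - 1):
    """Group rows by key(row), then split oversized groups into numbered chunks."""
    groups = defaultdict(list)
    for row in rows:
        groups[key(row)].append(row)

    partitions = {}
    for name, members in groups.items():
        for start in range(0, len(members), max_rows):
            part = start // max_rows + 1
            # One consistent naming convention for every partition.
            partitions[f"{name}_part{part}"] = members[start:start + max_rows]
    return partitions


# Hypothetical rows; max_rows=2 is artificially small to show the split.
rows = [
    {"year": 2023, "amount": 10},
    {"year": 2023, "amount": 20},
    {"year": 2023, "amount": 30},
    {"year": 2024, "amount": 40},
]
parts = partition_rows(rows, key=lambda r: r["year"], max_rows=2)
for name, members in parts.items():
    print(name, len(members))
```

Each resulting partition could then be written to its own sheet or workbook, with the partition key kept as a column so the pieces can be recombined in the Data Model.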

PivotTables and reporting from a data model

PivotTables built on a Data Model offer fast, scalable analysis without loading all rows into the worksheet. After loading data into the model, you can create PivotTables that pull measures and dimensions directly from the model’s tables. Use measures (instead of calculated fields in worksheets) for consistent results and better performance. This setup enables cross-table analysis, drill-down by category, and group-level insights without a heavy grid of raw data. When designing PivotTables, prefer single-page summaries with slicers and filters rather than stacking many complex formulas in the grid. This keeps the workbook responsive and makes it easier to refresh. Finally, save your PivotTable configuration as part of a reusable reporting template so teammates can produce identical analyses with minimal setup.

A practical tip is to organize the model into fact and dimension tables with clear relationships. This structure supports robust analytics and makes it easier to extend reports as new data arrives. Keep documentation for the model relationships and measures so future analysts can adapt the model quickly.
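The fact-and-dimension layout can be pictured with a tiny example. The Python sketch below is only an analogy for how a measure aggregates a fact table through a relationship to a dimension table; it is not DAX, and the table contents, the `total_revenue_by` helper, and the column names are all hypothetical.

```python
from collections import defaultdict

# Dimension table: one row per product, keyed by product_id.
products = {
    "P1": {"name": "Widget", "category": "Hardware"},
    "P2": {"name": "Gadget", "category": "Hardware"},
    "P3": {"name": "Manual", "category": "Docs"},
}

# Fact table: one row per sale, pointing at the dimension by product_id.
sales = [
    {"product_id": "P1", "qty": 2, "unit_price": 5.0},
    {"product_id": "P2", "qty": 1, "unit_price": 20.0},
    {"product_id": "P3", "qty": 4, "unit_price": 2.5},
    {"product_id": "P1", "qty": 3, "unit_price": 5.0},
]


def total_revenue_by(attribute):
    """Sum qty * unit_price over the fact rows, grouped by a dimension attribute."""
    totals = defaultdict(float)
    for sale in sales:
        dim_row = products[sale["product_id"]]  # follow the one-to-many relationship
        totals[dim_row[attribute]] += sale["qty"] * sale["unit_price"]
    return dict(totals)


by_category = total_revenue_by("category")
by_name = total_revenue_by("name")
print(by_category)
print(by_name)
```

The same calculation is defined once and reused for any grouping attribute, which is the property that makes model measures more consistent than formulas repeated across worksheet cells.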

When to move beyond Excel: databases and BI tools

For datasets that remain unwieldy even after using the Data Model and Power Query, consider offloading data to a database or a BI tool. A relational database (SQL Server, PostgreSQL, or cloud equivalents) provides scalable storage and efficient querying. BI platforms like Power BI can connect to these sources, enabling sophisticated dashboards with real-time refreshes and advanced visualizations, all without bloating Excel workbooks. This move preserves familiar analysis workflows while expanding capacity and reliability for large-scale data projects. When planning a transition, start with a small, representative dataset to prototype your reporting needs and validate performance before migrating broader workloads. The goal is to preserve familiar Excel-based analysis where it makes sense while delegating storage and heavy transformations to a system designed for scale.

XLS Library often recommends a hybrid approach: keep daily reporting in Excel for accessibility, while storing the raw, historical data in a database and retrieving summarized views into Excel or Power BI. This strategy balances usability with scalability and ensures your analytics can grow as data volumes expand.
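A minimal sketch of the hybrid pattern, assuming a SQL database holds the raw rows and only a grouped summary travels to the report. It uses Python's built-in `sqlite3` with an in-memory database purely for illustration; the `sales` table, its columns, and the sample rows are hypothetical, and a production setup would more likely use a server database such as SQL Server or PostgreSQL.

```python
import sqlite3

# In-memory database for illustration; real data would live in a persistent store.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (sale_date TEXT, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("2024-01-05", "North", 100.0),
        ("2024-01-20", "South", 50.0),
        ("2024-02-03", "North", 25.0),
        ("2024-02-14", "North", 75.0),
    ],
)

# The database does the heavy aggregation; only summary rows are returned.
summary = conn.execute(
    """
    SELECT substr(sale_date, 1, 7) AS month,
           region,
           COUNT(*) AS row_count,
           SUM(amount) AS total
    FROM sales
    GROUP BY month, region
    ORDER BY month, region
    """
).fetchall()
conn.close()

for row in summary:
    print(row)
```

However many raw rows the table holds, the result has one row per month and region, which fits comfortably in a worksheet or feeds a PivotTable.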

Common pitfalls and best practices

Even with the right tools, a few recurring issues can derail the workflow. Common pitfalls include overloading a single worksheet with formulas, neglecting data hygiene (duplicates and inconsistent formats), and failing to document the data model and query steps. Best practices to avoid these problems include: (1) design a clean data model with explicit relationships, (2) centralize data transformations in Power Query queries, (3) load only essential rows into the Data Model, (4) document your steps and version your workbook, and (5) establish a testing protocol for refreshes and data integrity. Finally, adopt a naming convention for tables, measures, and queries to keep the project readable for colleagues and future you. By following these practices, you’ll maintain performance and reliability as data volumes grow and reporting needs evolve.

Practical checklist to implement today

- Audit current workbook for row usage and identify unnecessary rows.

- Enable the Data Model and import core tables.

- Create targeted Power Query filters to prune incoming data before loading.

- Partition data into logical chunks and connect partitions to a central dashboard.

- Build PivotTables from the Data Model for scalable reporting.

- Consider external storage or BI tooling for very large datasets.

Following this checklist helps you establish a scalable, maintainable workflow that remains fast and accurate as your data grows. It also creates a clear evidence trail for audits and collaboration, which is essential for ongoing data governance.

Authoritative sources

For further reading and official guidance on Excel limits and data modeling, consider these sources:

- https://support.microsoft.com/en-us/office/excel-specifications-and-limits-1672b34d-7047-4a55-9ad4-cbd6d68a6aa0

- https://learn.microsoft.com/en-us/power-pivot/introduction-to-power-pivot

- https://www.technologyreview.com/

Tools & Materials

- Computer with modern Excel: Excel 2016+ recommended; 64-bit version preferred for large datasets

- Power Query (built-in or add-in): used to filter and transform data before loading

- Source data files or database connection: CSV/SQL-based sources; ensure data quality before import

- External database or data warehouse access (optional): for very large datasets or long-term storage

- Backup/versioning solution: protects data integrity during large transforms

- Test workbook: a safe environment to experiment with queries and partitions

Steps

Estimated time: 1-2 hours

- 1

Check the row limit of your Excel version

Identify the exact row limit of your installed Excel and confirm whether you’re working on a single worksheet or multiple sheets. This helps you decide when to pivot to the Data Model or partition data across sheets.

Tip: Open File > Account > About Excel to confirm your version, and note whether you're on 32-bit or 64-bit for memory considerations.

- 2

Enable and load data into the Data Model

Import your core tables into the Data Model (Power Pivot) and establish relationships between them. This moves heavy data out of worksheets and into a scalable, in-memory engine.

Tip: Keep the model lean by only importing fields you actually use in analyses; name tables clearly for easy maintenance.

- 3

Use Power Query to filter and import only necessary rows

Create a Power Query workflow that filters the source data by date, category, or other criteria before loading. This dramatically reduces memory use and speeds up refreshes.

Tip: Parameterize filters so you can reuse the same query across reports with different time ranges or segments.

- 4

Partition data across sheets or workbooks

Divide large datasets into logical partitions (e.g., by year or region) and connect them to a central dashboard. This avoids overloading a single worksheet while preserving accessibility.

Tip: Use consistent naming and maintain a single source of truth for partition keys to ensure consistency.

- 5

Create PivotTables from the Data Model

Build PivotTables that pull measures from the Data Model rather than raw rows. This enables scalable, fast reporting with accurate aggregations.

Tip: Prefer measures (DAX) rather than calculated fields in the worksheet for performance.

- 6

Evaluate alternatives for very large datasets

If Excel still struggles, consider storing data in a database and using BI tools like Power BI for advanced visuals and real-time refresh.

Tip: Prototype a small dataset first to validate performance before migrating larger pipelines.

- 7

Test and iterate on performance

Run refreshes, validate results, and iterate on model design and query logic to maximize speed and reliability.

Tip: Document each step so teammates can reproduce results and maintain governance.

People Also Ask

What is the current Excel row limit?

Modern Excel worksheets support up to 1,048,576 rows. If your dataset approaches this, use the Data Model, Power Query, or partition data across sheets/workbooks to maintain performance.

Can the Data Model help exceed the per-worksheet limit?

Yes. The Data Model uses an in-memory engine separate from the worksheet, allowing larger analyses via Power Pivot without loading all rows into a single sheet.

Should I rely on Power Query for large data handling?

Power Query is essential for filtering and transforming data before loading, which reduces memory usage and speeds up refreshes.

What are good alternatives for very large datasets?

Databases (SQL Server, PostgreSQL) or BI tools like Power BI handle larger datasets and offer scalable analytics beyond Excel.

Does Excel Online have the same row limit as desktop?

Excel Online uses the same fundamental limits for worksheet data, but some advanced features may differ or be restricted.

Is there a quick way to test performance before fully migrating data?

Yes—start with a representative subset of data in the Data Model, measure refresh times, and iterate on design.

The Essentials

- Know your version's limits and upgrade when feasible

- Load data into the Data Model for scalable analytics

- Filter early with Power Query to minimize rows

- Partition data to keep workloads manageable

- Pivot from the model for reliable reporting

- Move to databases/BI when Excel reaches practical limits