Why Can't Excel Handle Large Data: Troubleshooting Guide

Learn why Excel struggles with very large datasets, identify the symptoms, and follow a practical troubleshooting path with steps, tips, and alternatives for scalable data work.

Excel usually struggles with large data because it hits its worksheet and calculation limits, runs into memory pressure, or is constrained by how the workbook is modeled. Quick fixes include moving data into the Data Model (Power Pivot), simplifying formulas, and splitting data across workbooks. If these steps don’t suffice, consider Power Query, databases, or BI tools for robust, scalable data work.

Why this happens: understanding Excel's data ceiling

Why Excel can't handle large data is a common question for anyone who starts pushing beyond a few hundred thousand rows. Modern versions of Excel have a per-worksheet limit of 1,048,576 rows and 16,384 columns. As datasets approach these boundaries, calculation time grows sharply and memory pressure increases. According to XLS Library Analysis, 2026, practical limits are reached not just through row counts but also through formula complexity, volatile functions, and external connections. In practice, users see slower recalculations, intermittent crashes, and sometimes inconsistent results when the Data Model isn’t used or when worksheets are loaded with non-tabular data. Understanding these constraints helps you design safer workflows and choose the right tool for growth.
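A quick way to check a dataset against the limits above is to compare its shape with the worksheet grid. The sketch below is illustrative only: the function names and the one-row header default are assumptions made for this example, not part of Excel.

```python
# Worksheet grid limits in modern Excel, as quoted above.
MAX_ROWS = 1_048_576
MAX_COLS = 16_384


def fits_in_worksheet(n_rows: int, n_cols: int, header_rows: int = 1) -> bool:
    """Return True if a table of this shape fits on a single worksheet."""
    return n_rows + header_rows <= MAX_ROWS and n_cols <= MAX_COLS


def worksheet_usage(n_rows: int, header_rows: int = 1) -> float:
    """Fraction of the available worksheet rows the table would occupy."""
    return (n_rows + header_rows) / MAX_ROWS


print(fits_in_worksheet(900_000, 40))      # True
print(fits_in_worksheet(1_048_576, 40))    # False: the header row pushes it over
print(f"{worksheet_usage(900_000):.0%}")   # 86%
```

Fitting on the grid is only a necessary condition. A table at 86% of the row limit can still be slow if it carries heavy formulas.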

How Excel stores data affects performance

Excel treats raw data in worksheets differently from data modeled in the internal Data Model (Power Pivot). The Data Model uses columnar storage designed for large datasets, which can dramatically improve performance for aggregations and reporting. When you keep data in worksheets, every edit triggers recalculation of the formulas that depend on the changed cells, and that work grows quickly with the number of rows those formulas cover. Volatile functions such as OFFSET, INDIRECT, or NOW make this worse because they recalculate on every calculation pass, along with everything that depends on them. As a result, even a relatively modest dataset can slow to a crawl if formulas reference entire columns or rely on volatile functions.
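To make the two risk patterns concrete, here is a minimal Python sketch that flags volatile functions and full-column references in formula text. It is a heuristic under stated assumptions: the `VOLATILE` tuple is not an exhaustive list, `audit_formula` is a hypothetical helper name, and text inside string literals can produce false positives.

```python
import re

# Some functions Excel treats as volatile (not an exhaustive list).
VOLATILE = ("OFFSET", "INDIRECT", "NOW", "TODAY", "RAND", "RANDBETWEEN")
VOLATILE_RE = re.compile(r"\b(" + "|".join(VOLATILE) + r")\s*\(", re.IGNORECASE)

# Full-column references such as A:A or $B:$D, but not ranges like A1:B10.
FULL_COLUMN_RE = re.compile(
    r"(?<![A-Za-z0-9_])\$?[A-Za-z]{1,3}:\$?[A-Za-z]{1,3}(?![A-Za-z0-9_])"
)


def audit_formula(formula: str) -> dict:
    """Report volatile functions and full-column references in one formula."""
    volatile = sorted({m.group(1).upper() for m in VOLATILE_RE.finditer(formula)})
    return {
        "volatile": volatile,
        "full_column_refs": FULL_COLUMN_RE.findall(formula),
    }


print(audit_formula('=SUMIF(A:A,"x",B:B)+NOW()'))
print(audit_formula("=SUM(OFFSET(A1,0,0,10,1))"))
print(audit_formula("=SUM(A1:A100)"))
```

Running such a check over formulas exported from a workbook gives a rough shortlist of cells to rewrite first.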

Symptoms you might notice early

Common signs include sluggish recalculation after edits, workbook save times that stretch from seconds to minutes, and occasional out-of-memory errors on machines with limited RAM. If CPU usage spikes for no obvious reason, or if PivotTables take unusually long to refresh, you’re probably hitting structural limits rather than an isolated formula mistake. These symptoms are red flags that it’s time to re-architect the workflow.

The practical approach: a staged plan

Begin with the simplest fixes and escalate only as needed. Start by cleaning data and reducing the scope of formulas, then move data into a Data Model and use PivotTables that pull from that model. If the data won’t fit efficiently, segment data into smaller chunks or use an external data store (SQL, Access, or lightweight databases) and connect Excel via Power Query. The plan should balance ease of use with future growth.
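As an illustration of segmenting data into smaller chunks, the sketch below sums a value per category one chunk at a time and merges the partial results, so only a small summary ever needs to reach Excel. The column names `region` and `amount`, the helper `aggregate_in_chunks`, and the inline sample data are all hypothetical.

```python
import csv
import io
from collections import defaultdict
from itertools import islice


def aggregate_in_chunks(rows, key, value, chunk_size=100_000):
    """Sum `value` per `key`, reading `rows` one chunk at a time."""
    totals = defaultdict(float)
    rows = iter(rows)
    while True:
        chunk = list(islice(rows, chunk_size))
        if not chunk:
            break
        # Summarize this partition, then merge it into the running totals.
        partial = defaultdict(float)
        for row in chunk:
            partial[row[key]] += float(row[value])
        for k, v in partial.items():
            totals[k] += v
    return dict(totals)


# Stand-in for a large CSV export; a real file would be opened with open().
sample = io.StringIO("region,amount\nNorth,10\nSouth,5\nNorth,2.5\nSouth,1\n")
summary = aggregate_in_chunks(csv.DictReader(sample), "region", "amount", chunk_size=2)
print(summary)  # {'North': 12.5, 'South': 6.0}
```

Because each chunk is discarded after it is summarized, memory use depends on the chunk size and the number of categories rather than on the total row count.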

The role of Power Query and Power Pivot

Power Query handles data extraction, transformation, and loading (ETL) more efficiently than doing it all in a worksheet. It can pull data from multiple sources, clean it, and load it into a data model for lean downstream reporting. Power Pivot (the Data Model) stores data in a columnar structure, enabling fast aggregations and scalable calculations that would be impractical in worksheet cells.

When you still prefer Excel: best practices for large datasets

If Excel remains the tool of choice for your team, adopt practices that minimize strain: avoid volatile formulas, restrict full-column references, pre-aggregate data where possible, and cache results with calculated columns in the Data Model. Establish a clear governance model for workbook size, data refresh cadence, and version control. These habits reduce instability and make growth sustainable.

Steps

Estimated time: 45-90 minutes

1. Audit data scope and formulas

Review the dataset to drop unused columns and remove unnecessary duplicates. Consolidate formulas to minimize full-column references and avoid volatile functions where possible.

Tip: Use the Trace Precedents/Dependents tools to see how data flows.

2. Enable the Data Model

Import or connect your data to the Excel Data Model (Power Pivot). This stores data in a compact, efficient columnar format and supports fast aggregations.

Tip: Create a separate data staging area and load only the needed fields.

3. Load via Power Query

Use Power Query to extract and clean data, then load into the Data Model. This keeps the workbook lean and repeatable.

Tip: Use step-by-step ETL to track transformations.

4. Shift reporting to PivotTables from the Model

Build reports from the Data Model rather than direct worksheet calculations. PivotTables are optimized for large data.

Tip: Refresh pivots after data model loads.

5. Consider data segmentation

Split very large datasets into logical partitions and summarize each partition before merging results in Excel.

Tip: Label partitions clearly to avoid confusion.

6. Assess external options if growth continues

If Excel still struggles, evaluate a database or BI tool for the heavy lifting, with Excel as a front-end for analysis.

Tip: Plan a staged migration with data governance.
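The last step, letting a database do the heavy lifting while Excel stays the front-end, might look roughly like the following. This is a sketch using Python's built-in `sqlite3` module with an in-memory database. The `sales` table, its columns, and the sample rows are hypothetical, and a real setup would point at a database file or server instead.

```python
import csv
import io
import sqlite3

# In-memory database for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("North", 10.0), ("South", 5.0), ("North", 2.5)],
)

# Aggregate inside the database so only the small summary leaves it.
summary = conn.execute(
    "SELECT region, SUM(amount) AS total, COUNT(*) AS n "
    "FROM sales GROUP BY region ORDER BY region"
).fetchall()
conn.close()

# Write the summary as CSV text, which Excel can then import.
out = io.StringIO()
writer = csv.writer(out, lineterminator="\n")
writer.writerow(["region", "total", "n"])
writer.writerows(summary)
print(out.getvalue())
```

The detailed rows stay in the database and Excel receives one line per region, which keeps the workbook small regardless of how large the source table grows.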

Diagnosis: Workbook slows dramatically or errors out when loading or recalculating large datasets

Possible Causes

- High: Per-worksheet row and column limits reached

- High: Excessive use of volatile formulas or full-column references

- Medium: Not using the Data Model / Power Pivot for large data

- Low: External connections or add-ins increasing memory usage

Fixes

- Easy: Move data into the Data Model and use PivotTables from the model

- Easy: Replace volatile formulas with non-volatile equivalents and limit references to used ranges

- Easy: Load data via Power Query into the Data Model and avoid loading everything into worksheets

- Medium: Split data across multiple workbooks or consider a relational database for very large datasets

People Also Ask

What is the row limit in an Excel worksheet?

Each worksheet supports up to 1,048,576 rows. Working near this limit can slow calculations and trigger memory issues. Use the Data Model for large data volumes.

Excel worksheets have a hard limit of just over one million rows. If you approach that, consider using the Data Model for large data.

Should I upgrade to 64-bit Excel for large datasets?

64-bit Excel can use more memory, which helps with large datasets, but you still need efficient data modeling and ETL practices. The model approach generally yields bigger gains than hardware alone.

Yes, 64-bit Excel can help with memory, but you should also optimize data structure and use the Data Model.

What is the Data Model and why use it?

The Data Model is a columnar storage engine that supports fast aggregations and scalable data relationships. It keeps large datasets out of worksheets and powers PivotTables and Power BI connections.

The Data Model stores data efficiently and powers fast reports; use it for large data.

Can Power Query replace most Excel formulas for big data?

Power Query handles ETL and data shaping before loading to the model, which reduces workbook calculation load and improves reliability. It complements, rather than replaces, formulas.

Power Query handles data prep before formulas, improving performance.

When should I move to a database or BI tool?

If data volumes regularly exceed Excel’s practical limits, or if multi-user access and advanced analytics are needed, consider a database (SQL) or BI solution like Power BI for scalable reporting.

Move to a database or BI tool when data grows beyond Excel's comfort zone.

Are there quick wins to improve performance today?

Yes. Reduce full-column references, remove unnecessary sheets, convert formulas to calculated columns in the Data Model, and load data via Power Query instead of direct worksheet formulas.

Yes. Trim data, limit references, and use the Data Model and Power Query.

Watch Video

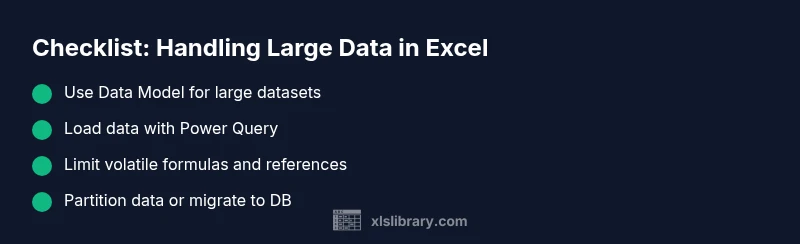

The Essentials

- Understand Excel's row/column limits and memory implications

- Move large data into the Data Model and use Power Query for ETL

- Limit volatile formulas and avoid full-column references

- Consider partitioning data or migrating to a database/BI tool for scale