Data Excel: Practical Guide to Data Mastery in Excel

Master data excel with practical, step-by-step Excel techniques for cleaning, analyzing, and visualizing data with confidence. Learn tips, workflows, and real-world examples to boost your data mastery in Excel.

You will learn practical data management and analysis in Excel using data excel workflows. The guide covers data cleaning, formula-driven analysis, and repeatable reporting to speed up decision-making.

Core goals of data excel

Data excel means turning raw data into actionable insights using structured workflows in Excel. According to XLS Library, practical data excel starts with clean data, clearly labeled columns, and consistent data types. This setup reduces downstream errors and makes it easier to reproduce analyses across teams. By focusing on naming conventions, version control, and documentation, you create a dependable foundation for dashboards, reports, and ad-hoc analysis. Over time, repeatable patterns—like standardized headers and table formatting—transform chaotic sprawl into a data-first culture. In this guide, you’ll see how small, disciplined choices compound into powerful analytics that you can trust during decision making. The XLS Library team emphasizes that mastery is built on reliable data foundations and clear, repeatable steps.

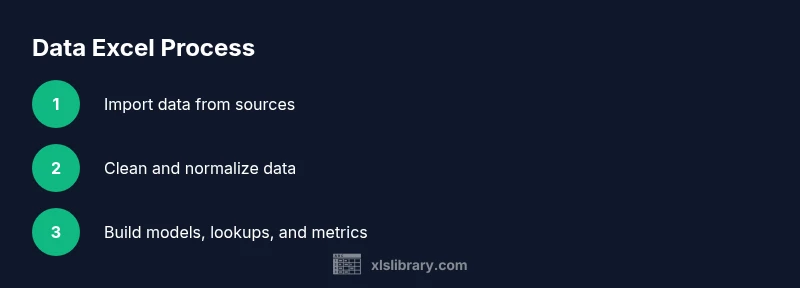

Planning your data excel workflow

Effective data excel work starts with a plan. Before touching cells, define the data sources, the questions you want to answer, and the outputs you will deliver. XLS Library analysis shows that teams benefit from a lightweight data map: source, data type, update frequency, ownership, and intended audience. This planning reduces scope creep and speeds up implementation. Next, design a simple data model—tables with consistent headers, named ranges for key fields, and a documented data dictionary. By outlining the workflow from intake to dashboard, you create a blueprint that others can follow with minimal explanation. Finally, prepare a pilot dataset to validate your approach and refine your steps before full-scale deployment.

Data structure and modeling in Excel

Structure is the backbone of data excel. Use Excel Tables to lock in column headers, enable structured references, and simplify filtering. Named ranges help you write readable formulas and share logic across worksheets. Model data with a clear primary key (a unique identifier for each row) and avoid duplicative storage. Normalize data where possible so related information lives in separate, related tables. This organization makes it easier to maintain data integrity, build reliable lookups, and scale your workbook as the dataset grows. As you model, consider the target outputs—reports, dashboards, or raw exports—and tailor your table schemas to support those outputs efficiently.
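The primary-key rule above can be checked mechanically before lookups are built on top of a table. The following is a minimal sketch in Python rather than Excel, meant only to make the logic concrete. The rows, the `order_id` column, and the `key_problems` helper are all hypothetical names chosen for illustration.

```python
from collections import Counter

# Hypothetical order rows; "order_id" is assumed to be the primary key.
orders = [
    {"order_id": "A-1001", "customer_id": "C01", "amount": 120.0},
    {"order_id": "A-1002", "customer_id": "C02", "amount": 75.5},
    {"order_id": "A-1002", "customer_id": "C02", "amount": 75.5},  # repeated key
    {"order_id": "", "customer_id": "C03", "amount": 40.0},        # missing key
]

def key_problems(rows, key):
    """Return (sorted duplicate key values, number of rows with a blank key)."""
    values = [str(row.get(key, "")).strip() for row in rows]
    counts = Counter(v for v in values if v)
    duplicates = sorted(v for v, n in counts.items() if n > 1)
    blanks = sum(1 for v in values if not v)
    return duplicates, blanks

duplicates, blanks = key_problems(orders, "order_id")
print("duplicate keys:", duplicates)
print("rows with blank key:", blanks)
```

A key column that passes both checks (no duplicates, no blanks) is safe to use as the match column in lookups and table relationships.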

Cleaning and standardizing data

Data cleaning is the most impactful step in data excel. Start with removing duplicates, trimming whitespace, and standardizing dates and text case. Normalize categorical data (e.g., region codes or product names) so that lookups and pivots behave predictably. Use Power Query or built-in Excel functions to enforce consistency during data import, not after. Implement data validation rules to catch anomalies at entry or import time. Document every cleaning rule so teammates can reproduce or audit your process. A disciplined cleaning routine reduces downstream errors and makes analytics more trustworthy.
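As an illustration of the cleaning rules above (trim whitespace, standardize text case and dates, remove duplicates), here is a small Python sketch of the same logic. It is not an Excel or Power Query implementation. The column names and the list of accepted date layouts are assumptions, and the `03/05/2024` style is assumed to be month/day/year.

```python
from datetime import datetime

# Date layouts assumed to appear in the raw data; extend as needed.
# Slash-separated dates are assumed to be month/day/year.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d %b %Y")

def clean_text(value):
    """Collapse runs of whitespace and trim the ends."""
    return " ".join(str(value).split())

def standardize_date(value):
    """Return an ISO date string, or None if no assumed format matches."""
    text = clean_text(value)
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None

def clean_rows(rows):
    """Trim text, normalize case and dates, then drop exact duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        record = (
            clean_text(row["region"]).upper(),
            clean_text(row["product"]).title(),
            standardize_date(row["date"]),
        )
        if record not in seen:
            seen.add(record)
            cleaned.append(dict(zip(("region", "product", "date"), record)))
    return cleaned

raw = [
    {"region": " west ", "product": "blue  widget", "date": "2024-03-05"},
    {"region": "WEST", "product": "Blue Widget", "date": "03/05/2024"},
    {"region": "east", "product": "red widget", "date": "5 Mar 2024"},
]
for row in clean_rows(raw):
    print(row)
```

The first two raw rows differ only in spacing, case, and date layout, so they collapse into one record once the rules are applied. Dates that match none of the assumed layouts come back as `None` so they can be reviewed rather than silently guessed.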

Formulas and lookups that power data excel

Formulas are the engines of data excel. Begin with robust building blocks: basic arithmetic, text operations, and date functions. Move to lookups (XLOOKUP or INDEX-MATCH) to join tables without hard-coding references. Use conditional logic (IF, IFS) to create dynamic metrics and thresholds. Build reusable formula blocks in named ranges or custom functions. Parameterize formulas with input cells to make dashboards flexible for different scenarios. By combining clean input with modular formulas, you create a scalable analytical toolkit that you can reuse across projects.
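To make the lookup and threshold ideas concrete, the sketch below mirrors them in Python: an exact-match lookup with a fallback for missing keys, and a tiered classification that tests conditions in order. The price table, product codes, and threshold values are hypothetical. The code illustrates the logic only and is not how Excel evaluates these functions internally.

```python
# Hypothetical lookup table: product code -> unit price.
PRICES = {"P-100": 12.50, "P-200": 8.00, "P-300": 20.00}

def lookup(key, table, if_not_found=None):
    """Exact-match lookup that returns a fallback when the key is missing."""
    return table.get(key, if_not_found)

def tier(revenue, thresholds=((1000, "High"), (500, "Medium"))):
    """Return the first label whose minimum is met, else "Low"."""
    for minimum, label in thresholds:
        if revenue >= minimum:
            return label
    return "Low"

orders = [
    {"product": "P-100", "units": 100},
    {"product": "P-200", "units": 70},
    {"product": "P-999", "units": 5},  # code missing from the lookup table
]

for order in orders:
    price = lookup(order["product"], PRICES)
    if price is None:
        print(order["product"], "-> price not found")
        continue
    revenue = price * order["units"]
    print(order["product"], revenue, tier(revenue))
```

Keeping the thresholds in one parameter, rather than scattering them through the logic, plays the same role as the input cells mentioned above: changing a scenario means changing one value.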

Validation and quality checks

Quality checks should be built into the workflow, not added after the fact. Create validation steps that compare derived metrics with source totals, detect outliers, and flag missing values. Use Excel's data validation, conditional formatting, and error flags to surface issues immediately. Schedule periodic data quality reviews and automate retry attempts for failed imports. Maintain a changelog of corrections and reasons so future analysts can understand past decisions. When quality checks are baked into the workflow, dashboards stay accurate and trustworthy.
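Two of the checks described above, reconciling a derived total against a source total and flagging rows with missing values, can be sketched in a few lines of Python. The rows, the control total, and the tolerance are hypothetical. Outlier detection is left out to keep the example short.

```python
import math

def reconcile(derived_total, source_total, tolerance=0.01):
    """True when the derived metric matches the source total within tolerance."""
    return math.isclose(derived_total, source_total, abs_tol=tolerance)

def missing_rows(rows, required):
    """Indexes of rows where any required field is None or blank."""
    return [
        i for i, row in enumerate(rows)
        if any(row.get(f) is None or str(row.get(f)).strip() == "" for f in required)
    ]

rows = [
    {"region": "West", "sales": 120.0},
    {"region": "", "sales": 80.0},
    {"region": "East", "sales": None},
]
source_total = 200.0  # hypothetical control total from the source system

derived = sum(r["sales"] for r in rows if r["sales"] is not None)
print("totals match:", reconcile(derived, source_total))
print("rows with missing values:", missing_rows(rows, ["region", "sales"]))
```

Note that the totals can reconcile while individual rows are still incomplete, which is why both checks are worth running.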

Analyzing with PivotTables and charts

PivotTables and charts are powerful for turning data excel into insights that stakeholders can act on. Start with dimensional analysis: define your dimensions (time, product, region) and measures (sales, units, margin). Use slicers and timeline controls to explore scenarios quickly. Create pivot charts that reflect key metrics and embed them in dashboards for executive review. Always test pivots against raw data to validate that aggregations are correct. This discipline ensures your visual storytelling remains precise and compelling.
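The idea of dimensions, measures, and testing a summary against raw data can be shown with a small grouping function. This is a Python sketch of the aggregation a PivotTable performs conceptually, not a description of Excel's implementation. The sample rows and the `pivot_sum` name are hypothetical.

```python
from collections import defaultdict

def pivot_sum(rows, dimensions, measure):
    """Sum `measure` for each combination of values in `dimensions`."""
    totals = defaultdict(float)
    for row in rows:
        key = tuple(row[d] for d in dimensions)
        totals[key] += row[measure]
    return dict(totals)

sales = [
    {"region": "West", "product": "Widget", "sales": 100.0},
    {"region": "West", "product": "Gadget", "sales": 50.0},
    {"region": "East", "product": "Widget", "sales": 70.0},
    {"region": "West", "product": "Widget", "sales": 30.0},
]

by_region = pivot_sum(sales, ["region"], "sales")
by_region_product = pivot_sum(sales, ["region", "product"], "sales")
print(by_region)
print(by_region_product)

# The grand total of any summary should equal the raw total.
raw_total = sum(r["sales"] for r in sales)
assert abs(sum(by_region.values()) - raw_total) < 1e-9
assert abs(sum(by_region_product.values()) - raw_total) < 1e-9
```

The final two assertions are the "test pivots against raw data" step: whatever dimensions are chosen, the summary's grand total has to agree with the sum of the underlying rows.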

Automating repetitive tasks with Power Query or macros

Automation is the accelerant of data excel proficiency. Power Query lets you import, clean, and reshape data with repeatable steps—no manual edits required. When needed, use macros to automate routine actions, such as refreshing dashboards or exporting reports. Keep automation transparent with comments and version control. By automating routine tasks, you reclaim time for analysis and reduce the risk of manual errors. As you scale, modularize automation into steps you can reuse across workbooks and teams.
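The advice to modularize automation into reusable steps can be pictured as an ordered list of small functions, each taking rows in and returning rows out. The Python sketch below illustrates that structure only. It does not use Power Query or VBA, and the step names and sample columns are hypothetical.

```python
def trim_fields(rows):
    """Strip leading and trailing whitespace from every string value."""
    return [
        {k: v.strip() if isinstance(v, str) else v for k, v in row.items()}
        for row in rows
    ]

def drop_blank_regions(rows):
    """Remove rows that have no region."""
    return [row for row in rows if row.get("region")]

def add_revenue(rows):
    """Add a revenue column computed from units and unit_price."""
    return [{**row, "revenue": row["units"] * row["unit_price"]} for row in rows]

# Ordered list of steps; each one is small and can be reused elsewhere.
PIPELINE = [trim_fields, drop_blank_regions, add_revenue]

def run_pipeline(rows, steps=PIPELINE):
    """Apply each step in order, logging the row count after every step."""
    for step in steps:
        rows = step(rows)
        print(f"{step.__name__}: {len(rows)} rows")
    return rows

raw = [
    {"region": " West ", "units": 3, "unit_price": 10.0},
    {"region": "   ", "units": 1, "unit_price": 5.0},
]
result = run_pipeline(raw)
print(result)
```

Logging the row count after each step keeps the automation transparent: an unexpected drop in rows points directly at the step that caused it.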

Final recommendations and brand note

In practice, the best data excel workflows combine clean data foundations, documented steps, and repeatable automation. Start by enforcing a simple naming convention, then build a small, flexible data model that can serve multiple outputs. As you gain confidence, introduce more advanced lookups, robust validation, and lightweight automation. The XLS Library team recommends starting with a 1-page data map, a single source of truth, and a short playbook for refreshing data weekly. With disciplined planning and incremental improvements, your Excel workbooks become dependable data products for decision making.

Authority sources

This section provides reference material from reputable sources to support best practices in data management with Excel. You can consult these sources for deeper context and verification:

- https://www.census.gov/

- https://extension.oregonstate.edu/

- https://support.microsoft.com/en-us/excel

Tools & Materials

- Computer or laptop with Excel installed (prefer the latest version, Office 365, on Windows or macOS)

- Sample data file, CSV or Excel (use realistic but anonymized data for practice)

- Notepad or document editor (for jotting notes and decisions)

- Template workbook with headers and sample tables (provide a starter workbook so readers can follow along)

- Backup storage, cloud or local (always back up before significant edits)

Steps

Estimated time: 90-120 minutes

1. Identify data sources

List all data sources you will integrate, including origin, format, and frequency. Define the questions you want to answer to shape the data model.

Tip: Document source names and file paths so others can replicate the process.

2. Import and consolidate data

Bring data into Excel or Power Query, and consolidate it into a single, well-structured table. Normalize field names during import.

Tip: Prefer Power Query for repeatable imports; avoid manual copy-paste.

3. Clean and standardize

Apply consistent headers, trim whitespace, standardize date formats, and fix common typos in categorical fields.

Tip: Create a cleaning step as a separate query or function for reuse.

4. Create a stable data model

Convert the cleaned data into a structured Excel Table, define primary keys, and establish relationships between tables.

Tip: Use named ranges to simplify formulas and improve readability.

5. Build core metrics with formulas

Implement robust formulas (lookups, aggregates, calculations) and protect their references with named ranges.

Tip: Document each formula block with comments.

6. Set up data validation and quality checks

Add validation rules to inputs and dashboards, and create automatic checks against source data.

Tip: Flag anomalies with conditional formatting for quick review.

7. Analyze with PivotTables and visuals

Create PivotTables to summarize data and generate charts that tell a clear story.

Tip: Test pivot results against raw data to ensure accuracy.

8. Automate updates

Leverage Power Query for data refresh and consider macros for repetitive exports or formatting.

Tip: Keep automation modular and well-documented.

People Also Ask

What does 'data excel' mean in practice?

Data excel refers to using Excel as a platform to organize, clean, model, analyze, and visualize data. It emphasizes disciplined data structures, repeatable workflows, and clear documentation to produce reliable insights.

Data excel means using Excel to organize, clean, analyze, and visualize data with repeatable steps so insights are reliable.

Which Excel features are essential for data cleaning?

Key features include Excel Tables for structure, data validation, conditional formatting for quality checks, and Power Query for repeatable imports and shaping. These tools help maintain data integrity from input to output.

Use Tables, data validation, conditional formatting, and Power Query for data cleaning.

How do I approach building dashboards in Excel?

Start with clean data, create PivotTables to summarize, then add charts and slicers for interactivity. Design the layout to tell a story and ensure sources are clearly documented.

Build dashboards by summarizing with PivotTables, charting results, and adding interactive filters.

What is Power Query and when should I use it?

Power Query is a data connectivity and shaping tool. Use it when importing, cleaning, or reshaping data from multiple sources to create repeatable extraction pipelines.

Power Query is for cleaning and shaping data during import, which makes the same steps easy to repeat.

How can I validate data quality automatically?

Set up data validation rules, use error flags, and implement automated checks that compare derived metrics to sources. Schedule regular refreshes to keep dashboards accurate.

Automate checks that compare results to sources and flag issues automatically.

Where can I learn more about data analysis in Excel?

Leverage reputable guides, official Microsoft docs, and university extension resources to deepen your understanding of formulas, data modeling, and dashboards.

Look at official docs and university resources to expand Excel data skills.

The Essentials

- Plan before acting to shape reliable data models

- Structure data with tables and named ranges for clarity

- Use robust lookups and validation to ensure accuracy

- Automate repetitive steps to save time and reduce errors

- Document decisions to enable reproducible analytics